QUOKKA

Quadrilateral, Umbra-producing, Orthogonal, Kangaroo-conserving Kode for Astrophysics!

Quokka is a two-moment radiation hydrodynamics code that uses the piecewise-parabolic method, with AMR and subcycling in time. It runs on CPUs (MPI+vectorized), NVIDIA GPUs (MPI+CUDA), or AMD GPUs (MPI+HIP) with a single-source codebase. Written in C++20. (100% Fortran-free.)

Note

The Quokka methods paper is now available on arXiv.

Warning: Beta physics modules

The following modules should currently be treated as beta because they have not yet been exercised in a published science application with Quokka: MHD, radiation, dust, particles, chemistry, and self-gravity. See Known Issues and Errata for the current status and caveats.

We use the AMReX library (Zhang et al., 2019) to provide patch-based adaptive mesh functionality. We take advantage of the C++ loop abstractions in AMReX in order to run with high performance on either CPUs or GPUs.

Example simulation set-ups are included in the GitHub repository for many astrophysical problems of interest related to star formation and the interstellar medium.

Developer guides

Contact

All communication takes place on the Quokka GitHub repository. You can start a Discussion for technical support, or open an Issue for any bug reports.

About


Quokka is a high-resolution, shock-capturing AMR radiation hydrodynamics code using the AMReX library (Zhang et al., 2019) to provide patch-based adaptive mesh functionality. We take advantage of the C++ loop abstractions in AMReX in order to run with high performance on either CPUs, NVIDIA GPUs, or AMD GPUs.

Physics module status

Per the documentation policy in the maintainer guide, physics features that have not yet been exercised in a published science application are marked as beta.

| Module | Status | Notes |
|---|---|---|
| Radiation | beta | Two-moment radiation transport and matter-radiation coupling |
| MHD | beta | Ideal MHD with constrained transport |
| Dust | beta | Dedicated dust dynamics and drag source terms |
| Particles | beta | Particle-mesh gravity, sink particles, star formation, and feedback |
| Chemistry | beta | Primordial chemistry source terms |
| Self-gravity | beta | Poisson solve for gas and particle mass |

Hydrodynamics and optically-thin cooling are not currently marked as beta.

See Known Issues and Errata for the current caveats and unsupported combinations.

Development methodology

The code is written in modern C++20, using MPI for distributed-memory parallelism, with the AMReX GPU abstraction compiling as either native CUDA code or native HIP code when GPU support is enabled.

We use a modern C++ development methodology, using CMake, CTest, and Doxygen. We use clang-format for automated code formatting, and clang-tidy and SonarCloud for static analysis, in order to audit code adherence to the ISO C++ Core Guidelines and the MISRA C/C++ guidelines. We additionally check the code for memory-corruption bugs using Clang’s AddressSanitizer.

There is an automated suite of test problems that can be run using CTest. Each test problem is compared against a validated solution (usually in the L1 norm) and passes only if the difference is within a specified tolerance.
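The comparison step can be sketched as a relative L1 error check. This is a minimal illustration, assuming uniform cell volumes and a relative normalization; the helper names `relativeL1Error` and `passesL1Test` are hypothetical and do not correspond to Quokka's test harness.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Relative L1 error: sum_i |u_i - uRef_i| / sum_i |uRef_i|.
// Hypothetical helper; assumes all cells have the same volume.
double relativeL1Error(const std::vector<double>& u, const std::vector<double>& uRef) {
  assert(u.size() == uRef.size() && !uRef.empty());
  double errNorm = 0.0;
  double refNorm = 0.0;
  for (std::size_t i = 0; i < u.size(); ++i) {
    errNorm += std::abs(u[i] - uRef[i]);
    refNorm += std::abs(uRef[i]);
  }
  assert(refNorm > 0.0);
  return errNorm / refNorm;
}

// A test problem passes if its relative L1 error is below the tolerance.
bool passesL1Test(const std::vector<double>& u, const std::vector<double>& uRef, double tol) {
  return relativeL1Error(u, uRef) < tol;
}
```

For example, a solution `{1.0, 2.1, 2.9, 4.0}` against the reference `{1.0, 2.0, 3.0, 4.0}` has a relative L1 error of 0.02, so it passes with a tolerance of 0.05 but fails with 0.01.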

Code development is managed using pull requests (PRs) on GitHub. In an effort to ensure long-term code maintainability, all code must be written in C++20 following the Coding Guidelines, it must compile using Clang without warnings, all tests must pass, and the static analyzers must show zero new bugs before a pull request is merged into the main branch.

User assistance and bug reports are managed via Discussions and Issues in the GitHub repository.

Numerical methods

Hydrodynamics

The hydrodynamics solver is an unsplit method, using the piecewise parabolic method (Colella & Woodward, 1984) for reconstruction in the primitive variables, the HLLC Riemann solver (Toro, 2013) for flux computations, and a method-of-lines formulation for the time integration.

We use the method of (Miller & Colella, 2002) to reduce the order of reconstruction in zones where shocks are detected in order to suppress spurious oscillations in strong shocks.

Radiation

The radiation hydrodynamics formulation is based on the mixed-frame moment equations (e.g., (Mihalas & Mihalas, 1984)). The radiation subsystem is coupled to the hydrodynamic subsystem via operator splitting: the hydrodynamic update is computed first, followed by the radiation update, which includes the source terms corresponding to the radiation four-force applied to both the radiation and hydrodynamic variables. The radiation subsystem also uses a method-of-lines formulation, advanced with the same time integrator as the hydrodynamic subsystem.

The hyperbolic radiation subsystem is solved with an unsplit method: PPM reconstructs the moment variables, the HLL Riemann solver computes the fluxes, and the wavespeeds are computed with the ‘frozen Eddington factor’ approximation (Balsara, 1999), which is more robust than using the eigenvalues of the M1 system itself (Skinner & Ostriker, 2013).

We reconstruct the energy density and the reduced flux \(\vec{f} = \vec{F}/(cE)\) in order to maintain the flux-limiting condition \(|\vec{F}| \le cE\) in discontinuous and near-discontinuous radiation flows.

To ensure the correct behavior of the advection terms in the asymptotic diffusion limit (Lowrie & Morel, 2001), we modify the Riemann solver according to (Skinner et al., 2019). We use the Lorentz-factor local closure of (Levermore, 1984) to compute the variable Eddington tensor.
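For reference, the Levermore (1984) Lorentz-factor closure gives the Eddington factor \(\chi(f) = (3 + 4f^2)/(5 + 2\sqrt{4 - 3f^2})\) as a function of \(f = |\vec{F}|/(cE)\), and the pressure tensor \(\mathsf{P} = E\,[\tfrac{1-\chi}{2}\mathsf{I} + \tfrac{3\chi-1}{2}\hat{n}\hat{n}]\) with \(\hat{n} = \vec{F}/|\vec{F}|\). The sketch below evaluates these formulas for a single cell. The function names are hypothetical, and clamping \(f\) to 1 is shown only as one possible way to respect \(|\vec{F}| \le cE\).

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Tensor3 = std::array<std::array<double, 3>, 3>;

// Levermore (1984) Eddington factor chi(f) for reduced flux f = |F| / (cE).
double eddingtonFactor(double f) {
  assert(f >= 0.0 && f <= 1.0);
  return (3.0 + 4.0 * f * f) / (5.0 + 2.0 * std::sqrt(4.0 - 3.0 * f * f));
}

// Radiation pressure tensor P = E [ (1 - chi)/2 I + (3 chi - 1)/2 n n ], n = F / |F|.
Tensor3 pressureTensor(double E, const std::array<double, 3>& F, double c) {
  assert(E > 0.0 && c > 0.0);
  const double Fmag = std::sqrt(F[0] * F[0] + F[1] * F[1] + F[2] * F[2]);
  const double f = std::fmin(Fmag / (c * E), 1.0);  // enforce |F| <= cE
  const double chi = eddingtonFactor(f);
  std::array<double, 3> n{0.0, 0.0, 0.0};           // direction is irrelevant when F = 0
  if (Fmag > 0.0) {
    for (int i = 0; i < 3; ++i) n[i] = F[i] / Fmag;
  }
  Tensor3 P{};
  for (int i = 0; i < 3; ++i) {
    for (int j = 0; j < 3; ++j) {
      const double iso = (i == j) ? 0.5 * (1.0 - chi) : 0.0;
      P[i][j] = E * (iso + 0.5 * (3.0 * chi - 1.0) * n[i] * n[j]);
    }
  }
  return P;
}
```

The closure recovers the isotropic limit \(\chi = 1/3\) at \(f = 0\) and free streaming \(\chi = 1\) at \(f = 1\); in both cases the trace of \(\mathsf{P}\) equals \(E\).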

The source terms corresponding to matter-radiation energy exchange are solved implicitly with the method of (Howell & Greenough, 2003) following the hyperbolic subsystem update. The matter-radiation momentum update is likewise computed implicitly in order to maintain the correct behavior in the asymptotic diffusion limit (Skinner et al., 2019).
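To illustrate what an implicit exchange update involves, the sketch below solves a single-group (gray) backward-Euler exchange, \(E_r - E_r^n = \Delta t\, c \rho \kappa\,(a T^4 - E_r)\), with Newton's method while holding \(\rho c_v T + E_r\) fixed. It is not the Howell & Greenough (2003) scheme itself: constant opacity \(\kappa\) and specific heat \(c_v\), the absence of multigroup Planck integrals, and the function name are all simplifying assumptions.

```cpp
#include <cassert>
#include <cmath>

struct ExchangeResult {
  double Erad;     // radiation energy density after the step
  double Tgas;     // gas temperature after the step
  int iterations;  // Newton iterations used (-1 if not converged)
};

// Backward-Euler gray matter-radiation energy exchange solved with Newton's method.
// Hypothetical sketch: constant kappa and c_v; aRad and c in the caller's units.
ExchangeResult implicitEnergyExchange(double Erad0, double Tgas0, double rho, double cv,
                                      double kappa, double dt, double aRad, double c) {
  assert(rho > 0.0 && cv > 0.0 && kappa >= 0.0 && dt > 0.0);
  const double rhoCv = rho * cv;
  const double Etot = rhoCv * Tgas0 + Erad0;  // conserved by the exchange
  const double k = dt * c * rho * kappa;      // dimensionless coupling strength
  double Er = Erad0;
  for (int it = 1; it <= 50; ++it) {
    const double T = (Etot - Er) / rhoCv;     // gas temperature implied by energy conservation
    const double residual = Er - Erad0 - k * (aRad * std::pow(T, 4) - Er);
    const double dResidual = 1.0 + k + k * 4.0 * aRad * std::pow(T, 3) / rhoCv;
    const double dEr = -residual / dResidual;
    Er += dEr;
    if (std::abs(dEr) <= 1e-13 * std::abs(Etot)) {
      return {Er, (Etot - Er) / rhoCv, it};
    }
  }
  return {Er, (Etot - Er) / rhoCv, -1};
}
```

Because the residual is increasing and concave in \(E_r\), after at most one step the Newton iterates approach the root monotonically from below in this simple model. For a very large \(\Delta t\) the result approaches equilibrium, \(E_r \approx a T^4\).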

Equations

Fluids, radiation, magnetic fields, and dust

Assuming the speed of light is not reduced (\(\hat{c} = c\)), Quokka solves the following conservation laws for the cell-centered variables, as written below in the Heaviside–Lorentz system of units:

where the total fluid energy is

\[
E = \rho e + \frac{1}{2}\rho |\vec{v}|^2 + \frac{1}{2}|\vec{B}|^2,
\]

and the face-centered magnetic field is evolved according to the ideal MHD induction equation:

\[
\frac{\partial \vec{B}}{\partial t} = \nabla \times \left(\vec{v} \times \vec{B}\right).
\]

Quokka also solves the non-conservative auxiliary internal energy equation:

and the gravitational Poisson equation:

\[
\nabla^2 \phi = 4\pi G \left(\rho + \sum_i \rho_i\right), \qquad \vec{g} = -\nabla \phi,
\]

where

- \(\rho\) is the gas density,

- \(\vec{v}\) is the gas velocity,

- \(E\) is the total fluid energy density, including magnetic energy when MHD is enabled,

- \(\rho e_{\text{aux}}\) is the auxiliary gas internal energy density,

- \(X_n\) is the fractional concentration of species \(n\),

- \(\dot{X}_n\) is the chemical reaction term for species \(n\),

- \(\mathcal{H}\) is the optically-thin volumetric heating term (radiative and chemical),

- \(\mathcal{C}\) is the optically-thin volumetric cooling term (radiative and chemical),

- \(p(\rho, e)\) is the gas pressure derived from a general convex equation of state,

- \(\vec{B}\) is the magnetic field,

- \(\mathsf{I}\) is the identity tensor,

- \(E_g\) is the radiation energy density for group \(g\),

- \(F_g\) is the radiation flux for group \(g\),

- \(\mathsf{P}_g\) is the radiation pressure tensor for group \(g\),

- \(G_g\) is the radiation four-force \([G^0_g, \vec{G}_g]\) due to group \(g\),

- \(\Delta S_{\text{rad}}\) is the change in gas internal energy due to radiation over a timestep,

- \(\phi\) is the Newtonian gravitational potential,

- \(\vec{g}\) is the gravitational acceleration,

- \(\rho_i\) is the mass density due to particle \(i\),

- \(\rho_{\mathrm{d},k}\) is the dust mass density for dust species \(k\) (\(k \in [1, N_{\mathrm{dust}}]\)),

- \(\vec{v}_{\mathrm{d},k}\) is the dust velocity for dust species \(k\),

- \(T_{\mathrm{s},k}\) is the aerodynamic stopping time for dust species \(k\),

- \(\omega\) is the fraction of frictional heating deposited into the gas.

Note that since the work done by radiation on the gas is included in the \(c \sum_g G^0_g\) term, \(\Delta S_{\text{rad}}\) is not the same as \(c \sum_g G^0_g\).

Collisionless particles

Quokka solves the following equation of motion for collisionless particles:

\[
\frac{d^2 \vec{x}_i}{dt^2} = \vec{g}(\vec{x}_i),
\]

where \(\vec{x}_i\) is the position vector of particle \(i\).

Citation

If you use Quokka or numerical methods originally implemented for Quokka in your research, please cite (Wibking & Krumholz, 2022).

@ARTICLE{2022MNRAS.512.1430W,
    author = {{Wibking}, Benjamin D. and {Krumholz}, Mark R.},
    title = "{QUOKKA: a code for two-moment AMR radiation hydrodynamics on GPUs}",
    journal = {\mnras},
    keywords = {hydrodynamics, methods: numerical, Astrophysics - Instrumentation and Methods for Astrophysics},
    year = 2022,
    month = may,
    volume = {512},
    number = {1},
    pages = {1430-1449},
    doi = {10.1093/mnras/stac439},
    archivePrefix = {arXiv},
    eprint = {2110.01792},
    primaryClass = {astro-ph.IM},
    adsurl = {https://ui.adsabs.harvard.edu/abs/2022MNRAS.512.1430W},
    adsnote = {Provided by the SAO/NASA Astrophysics Data System}
}

What to cite

Please also cite the module- or method-specific papers that apply to your work.

- Core Quokka hydro / AMR code: (Wibking & Krumholz, 2022)

- Radiation-hydrodynamics time integration: (He et al., 2024)

- Multigroup radiation-hydrodynamics: (He et al., 2024)

- Particle-mesh interactions: (He et al., 2026)

The following modules are currently marked as beta for science-use maturity in Quokka documentation: MHD, radiation, dust, particles, chemistry, and self-gravity. For beta capabilities, cite the relevant methods papers above when they exist, and report the exact Quokka version or commit hash used in your study.

Published scientific applications with Quokka

- Galactic outflows: (Vijayan et al., 2024), (Huang et al., 2025), (Vijayan et al., 2025)

Known Issues and Errata

This page collects current limitations, beta-status physics features, and any corrections to the published documentation or methods papers.

Beta physics modules

The following Quokka physics modules should currently be treated as beta because they have not yet been exercised in a published science application with Quokka:

| Module | Status | Notes | Documentation |
|---|---|---|---|
| Radiation | beta | Two-moment radiation transport and matter-radiation coupling | Equations, Radiation Integrator |
| Magnetohydrodynamics (MHD) | beta | Ideal MHD with constrained transport | MHD module |
| Dust | beta | Dedicated dust dynamics and dust-gas drag source terms | Dust module |
| Particles | beta | Particle-mesh gravity, sink particles, star formation, and feedback | Particles |
| Chemistry | beta | Primordial chemistry source terms | Equations, Runtime parameters |
| Self-gravity | beta | Poisson solve for gas and particle mass | Equations |

Hydrodynamics and optically-thin cooling are not currently marked as beta.

Current limitations

- Reflecting magnetic-field boundary conditions are not yet physically complete; Quokka currently applies reflect_even to all magnetic-field components.

- MHD + radiation is not yet tested and is currently explicitly disabled in the code.

- MHD + dust is not exercised by an in-tree problem and should be treated as untested.

- Dust currently neither contributes to, nor feels forces from, the gravitational potential.

Errata

No published errata are currently recorded on this page.

If you find a documentation error or a discrepancy between the documented equations and the implementation, please open an issue on the Quokka GitHub repository.

Glossary

Quick reference for recurring terminology across the Quokka documentation and reviews.

- PR (Pull Request): a proposed code change waiting for review.

- Revert: a follow-up change that undoes a previous merge to restore a known-good state.

- ADR (Architecture Decision Record): a short document capturing an important technical decision, options considered, and the rationale.

- CI (Continuous Integration): automated builds and tests that run on every change.

- GPU backend (CUDA/HIP): the GPU programming platform (CUDA for NVIDIA GPUs, HIP for AMD GPUs) selected at compile time.

- MPI: a standard for running a program across multiple processes (often across nodes).

- Unit test: a small test for a single function or module.

- Physics test (domain test): a small end-to-end run that checks scientifically meaningful results.

- Regression test: a test that ensures behavior hasn’t unintentionally changed compared to a known baseline.

- Benchmark: a timing or resource-usage measurement to track performance.

- Debug build / Release build: debug enables extra checks and slower safety features; release is optimized for speed.

- Stream (GPU): an ordered queue of GPU work; operations in a stream execute in sequence.

- Seed (random): a number used to initialize a random number generator so results can be repeated.

- Tolerance (absolute/relative): acceptable numerical error bounds (e.g., max difference allowed).

- Baseline: a trusted reference result used for comparison.

Magnetohydrodynamics Module

Warning: Beta feature

The MHD module has not yet been exercised in a published science application with Quokka and should currently be treated as beta.

The magnetohydrodynamics (MHD) module augments Quokka’s Euler solver with magnetic fields while keeping the discrete divergence of \(\vec{B}\) exactly zero. It is built on the same method-of-lines framework described in Equations, but introduces face- and edge-centred fields to support constrained transport.

Finite-volume discretization

- Cell-centred conserved variables (\(\rho\), \(\rho\vec{v}\), \(E_{\text{gas}}\) and optional scalars) live in the same MultiFab as the hydrodynamics module. Magnetic fluxes \(B_1\), \(B_2\), \(B_3\) are stored on face-centred MultiFabs so that each component is collocated with its normal face area.

- Edge-centred electromotive forces (EMFs) are defined on a third staggered grid and assembled on demand. This staggered arrangement lets the induction update follow Stokes’ theorem exactly, ensuring \(\nabla \cdot \vec{B}=0\) to machine precision on uniform grids.

- Source terms (cooling, gravity, etc.) act on the cell-centred state. Since only ideal MHD is supported, there are no source terms in the magnetic induction equation.

Reconstruction and Riemann solves

Each sweep through computeHydroFluxes performs the following steps for every coordinate direction:

- Convert conserved variables (plus face-centred magnetic fluxes) to primitive variables with HydroSystem::ConservedToPrimitive.

- Build shock-flattening coefficients that detect strong compression and locally limit the reconstruction stencil to maintain monotonicity.

- Reconstruct left/right interface states using the requested order: donor-cell (1), piecewise linear with a minmod limiter (2), PPM (3) or extrema-preserving PPM (5, the default). The transverse magnetic components are reconstructed alongside the fluid primitives so that the Riemann solver sees a consistent state.

- Solve the one-dimensional Riemann problem with the HLLD solver. The normal magnetic field is taken directly from the face-centred data so that the solution respects the jump condition \(B_n^- = B_n^+\). The solver returns both fluxes for the conserved state and a face-centred velocity that is reused by particle advection and, optionally, by the EMF calculation.
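The order-2 option can be illustrated with a one-dimensional piecewise-linear reconstruction using the minmod limiter. This is a hedged sketch on a scalar array with one ghost cell on each side, not Quokka's computeHydroFluxes; the struct and function names are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// minmod limiter: zero at local extrema, otherwise the smaller-magnitude slope.
double minmod(double a, double b) {
  if (a * b <= 0.0) return 0.0;
  return (std::abs(a) < std::abs(b)) ? a : b;
}

struct InterfaceStates {
  std::vector<double> left;   // left[i]: state just left of interface i+1/2
  std::vector<double> right;  // right[i]: state just right of interface i+1/2
};

// Piecewise-linear reconstruction of cell averages q[0..n-1]; interfaces
// i = 1..n-3 are filled (cells 0 and n-1 act as ghost cells).
InterfaceStates reconstructPLM(const std::vector<double>& q) {
  const std::size_t n = q.size();
  assert(n >= 4);
  std::vector<double> slope(n, 0.0);
  for (std::size_t i = 1; i + 1 < n; ++i) {
    slope[i] = minmod(q[i] - q[i - 1], q[i + 1] - q[i]);
  }
  InterfaceStates s{std::vector<double>(n - 1, 0.0), std::vector<double>(n - 1, 0.0)};
  for (std::size_t i = 1; i + 2 < n; ++i) {
    s.left[i] = q[i] + 0.5 * slope[i];           // right edge of cell i
    s.right[i] = q[i + 1] - 0.5 * slope[i + 1];  // left edge of cell i+1
  }
  return s;
}
```

For linear data the reconstruction is exact, and at a step the limiter sets the slope to zero so that no new extrema are created.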

If the high-order solve produces negative densities, the code marks the affected cells and later recomputes their update with a first-order (local Lax–Friedrichs) flux (see Fallbacks and positivity control below). Negative pressures are corrected separately by the dual-energy synchronisation step.

Edge-centred electromotive forces

MHDSystem::ComputeEMF constructs either cell-centered or edge-centred EMFs \(\mathcal{E} = -\vec{v}\times\vec{B}\) by combining face-centred magnetic fields with one of the three schemes selected by emf_compute_scheme:

- FelkerStone2017 – velocities reconstructed from cell-centered states to edges.

- Balsara2025 – face-centered magnetic fields averaged and combined with cell-centered velocities; the EMF is computed at cell centers and then reconstructed to edges.

- Quokka2026 – the face-centered velocity returned by the Riemann solver, reconstructed to edges.

The stencil used in this reconstruction is controlled by emf_reconstruction_order, with the same order options as the flux reconstruction. After reconstruction, Quokka averages the four quadrant-centred EMFs surrounding each edge using one of two formulas selected by emf_averaging_scheme:

- LondrilloDelZanna2004 – the Londrillo & Del Zanna (2004) upwind constrained-transport formula, which weights the quadrants using characteristic MHD signal speeds.

- Balsara2025 – EMF averaging with a dissipative term built from the wavespeeds and the magnetic-field jumps.

During the LondrilloDelZanna2004 average, the code leverages the fast magnetosonic speeds computed at interfaces during the Riemann solve so that the EMF averaging is properly upwinded without requiring a full state reconstruction and eigenvalue computation.

Constrained-transport update

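Once EMFs are available, MHDSystem::SolveInductionEqn updates the face-centred magnetic fluxes using the area-integrated form of Faraday’s law. For a face with normal direction \(w_0\) and transverse directions \(w_1\), \(w_2\), with \((w_0, w_1, w_2)\) a cyclic permutation of \((x, y, z)\), the update reads

\[
B_{w_0}^{n+1} = B_{w_0}^{n} - \Delta t \left[ \frac{\mathcal{E}_{w_2}^{(w_1 + 1/2)} - \mathcal{E}_{w_2}^{(w_1 - 1/2)}}{\Delta x_{w_1}} - \frac{\mathcal{E}_{w_1}^{(w_2 + 1/2)} - \mathcal{E}_{w_1}^{(w_2 - 1/2)}}{\Delta x_{w_2}} \right],
\]

where the superscript \((w_k \pm 1/2)\) denotes the edge displaced by half a cell from the face centre in the \(\pm w_k\) direction.

For every cell, the contributions of a given edge EMF to the two cell faces that meet at that edge enter the discrete divergence with equal magnitude and opposite sign. The discrete divergence, computed as a finite difference of the face-centred fluxes, is therefore unchanged by the update.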
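The cancellation can be checked numerically. The sketch below uses a 2D periodic, uniform staggered grid with arbitrary edge EMFs \(\mathcal{E}_z\) and verifies that the cell-centred divergence is unchanged to round-off. The grid layout and all names are assumptions for illustration; MHDSystem::SolveInductionEqn itself operates on 3D AMReX MultiFabs.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// 2D periodic staggered grid (uniform spacing dx, dy).
// Storage index (i, j) holds: Bx on face (i-1/2, j), By on face (i, j-1/2),
// and Ez on edge (i-1/2, j-1/2).
struct StaggeredGrid {
  int nx, ny;
  double dx, dy;
  std::vector<double> Bx, By, Ez;
  StaggeredGrid(int nx_, int ny_, double dx_, double dy_)
      : nx(nx_), ny(ny_), dx(dx_), dy(dy_),
        Bx(nx_ * ny_, 0.0), By(nx_ * ny_, 0.0), Ez(nx_ * ny_, 0.0) {
    assert(nx_ > 0 && ny_ > 0 && dx_ > 0.0 && dy_ > 0.0);
  }
  // Periodic wrap-around index; valid for i in [-nx, 2nx) and j in [-ny, 2ny).
  int idx(int i, int j) const { return ((j + ny) % ny) * nx + (i + nx) % nx; }
};

// Cell-centred divergence of cell (i, j) from its four face values.
double divergence(const StaggeredGrid& g, int i, int j) {
  return (g.Bx[g.idx(i + 1, j)] - g.Bx[g.idx(i, j)]) / g.dx +
         (g.By[g.idx(i, j + 1)] - g.By[g.idx(i, j)]) / g.dy;
}

// Constrained-transport update of dB/dt = -curl(Ez zhat) in 2D:
// dBx/dt = -dEz/dy on x-faces, dBy/dt = +dEz/dx on y-faces.
void constrainedTransportUpdate(StaggeredGrid& g, double dt) {
  std::vector<double> BxNew = g.Bx;
  std::vector<double> ByNew = g.By;
  for (int j = 0; j < g.ny; ++j) {
    for (int i = 0; i < g.nx; ++i) {
      BxNew[g.idx(i, j)] -= dt * (g.Ez[g.idx(i, j + 1)] - g.Ez[g.idx(i, j)]) / g.dy;
      ByNew[g.idx(i, j)] += dt * (g.Ez[g.idx(i + 1, j)] - g.Ez[g.idx(i, j)]) / g.dx;
    }
  }
  g.Bx = BxNew;
  g.By = ByNew;
}
```

Because the check holds for any EMF values, the choice of emf_compute_scheme or emf_averaging_scheme affects accuracy but not the divergence constraint.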

Time integration

The MHD module uses the same second-order strong stability preserving Runge–Kutta (SSPRK2) integrator that advances the hydrodynamics:

- Predictor stage: Evaluate numerical fluxes (and EMFs) from the state at \(t^n\) and form a provisional update using HydroSystem::PredictStep.

- Corrector stage: Recompute fluxes/EMFs from the predictor state, average them with the first-stage fluxes, and apply HydroSystem::PredictStep a second time to obtain \(U^{n+1}\).
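The predictor/corrector description above is the standard SSPRK2 (Heun) scheme written with averaged right-hand sides. The sketch below applies it to a scalar ODE \(du/dt = L(u)\), which stands in for the semi-discrete system; the function names are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <functional>

// One SSPRK2 step in the "average the stage right-hand sides" form:
//   U1      = U^n + dt L(U^n)
//   U^{n+1} = U^n + dt/2 [L(U^n) + L(U1)]
// which is algebraically identical to U^{n+1} = (U^n + U1 + dt L(U1)) / 2.
double ssprk2Step(double u, double dt, const std::function<double(double)>& rhs) {
  const double L0 = rhs(u);
  const double u1 = u + dt * L0;    // predictor stage
  const double L1 = rhs(u1);        // right-hand side at the predictor state
  return u + 0.5 * dt * (L0 + L1);  // corrector with averaged right-hand sides
}

// Integrate du/dt = rhs(u) from t = 0 to tEnd using nSteps equal steps.
double integrate(double u0, double tEnd, int nSteps, const std::function<double(double)>& rhs) {
  assert(nSteps > 0);
  const double dt = tEnd / nSteps;
  double u = u0;
  for (int n = 0; n < nSteps; ++n) u = ssprk2Step(u, dt, rhs);
  return u;
}
```

For the decay problem \(du/dt = -u\), halving the step size reduces the error at \(t = 1\) by roughly a factor of four, as expected for a second-order method.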

Strang-split source terms (chemistry, cooling, gravity) advance by half steps before and after the hydrodynamic RK stages. Radiation transport advances in a separate, fully operator-split solve after the hydrodynamic update.

Fallbacks and positivity control

- Cells flagged during reconstruction because the high-order state produced a negative density are re-updated with a first-order local Lax–Friedrichs flux and EMFs reconstructed with donor-cell stencils. This “first-order flux correction” preserves robustness while keeping the divergence constraint. (The dual-energy sync step is responsible for repairing negative pressures.)

- Density and temperature floors are enforced after every RK stage.

- When the dual-energy formalism is enabled, the auxiliary internal energy is synchronised with the magnetized total energy to avoid large truncation errors in the internal energy in high Mach number regions.

Runtime controls

The following input parameters tune the MHD discretization and are documented in more detail in Runtime parameters:

- reconstruction_order – spatial order for hydro flux reconstruction (default 3 = PPM).

- emf_reconstruction_order – spatial order for the EMF reconstruction (default 5 = extrema-preserving PPM).

- emf_compute_scheme – choose FelkerStone2017, Balsara2025, or Quokka2026 for computing the EMF either at cell centers or at edges (default Balsara2025).

- emf_averaging_scheme – choose LondrilloDelZanna2004 or Balsara2025 for edge averaging (default Balsara2025).

- artificial_viscosity_k – optional scalar viscosity coefficient that adds a diffusive flux to the momentum equations and can damp post-shock oscillations.

- quokka.bc – (required) choose periodic or reflecting for the boundary conditions. For reflecting boundaries, we use amrex::BCType::reflect_even for all magnetic-field components. Support for properly reflecting magnetic-field boundaries will be added in the future.

Particles, star formation and feedback

Warning: Beta feature

The particles, star-formation, and feedback module has not yet been exercised in a published science application with Quokka and should currently be treated as beta.

All particle features can only be activated when compiled with -DAMReX_SPACEDIM=3.

Sink Particle Type

Sink particles carry the following real-valued attributes:

- Mass: Current particle mass (\(M\))

- Velocity: Three-dimensional velocity vector \((v_x, v_y, v_z)\)

Unlike StochasticStellarPop particles, sink particles have no evolution stage or birth/death metadata. They represent unresolved gravitationally-collapsed objects that accrete mass from the surrounding gas.

Sink Particle Formation

Overview

The sink formation module creates sink particles from gas cells that violate the Jeans criterion (Truelove et al. 1997). The algorithm is operator-split from the hydrodynamics and runs once per hydro timestep at the finest AMR level.

Formation criteria

A sink particle is created in a cell if all of the following conditions are met:

- Jeans instability: The cell density exceeds the local Jeans density,

\[
\rho > \rho_J = \frac{J^2 \pi c_{\text{eff}}^2}{G \Delta x^2},
\]

where \(J = 0.25\) is the Jeans number and \(c_{\text{eff}} = c_s \sqrt{1 + 0.74 / \beta}\) is the effective sound speed accounting for magnetic pressure support (\(\beta = P_{\text{thermal}} / P_{\text{magnetic}}\)). For non-MHD simulations, \(\beta \to \infty\) and \(c_{\text{eff}} = c_s\).

- Not in an existing accretion zone: The cell is not already being accreted by an existing sink particle (i.e., the accretion rate in the cell is zero).

- Local density maximum: The cell has the highest density among all cells within a sphere of radius \(3 \Delta x\). This prevents multiple sink particles from forming in close proximity.

Mass and momentum assignment

When a sink particle forms, it receives the excess mass above the Jeans density:

\[
M = (\rho - \rho_J)\, \Delta x^3.
\]

The particle inherits the gas velocity of the parent cell. The cell density is then set to \(\rho_J\), and all conserved quantities (momentum, energy, internal energy) are scaled by the factor \(\rho_J / \rho\).
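The Jeans test and mass assignment can be sketched for a single cubic cell of width \(\Delta x\), using \(\rho_J = J^2 \pi c_{\text{eff}}^2 / (G \Delta x^2)\) with \(J = 0.25\) and a sink mass \((\rho - \rho_J)\Delta x^3\). Only the Jeans criterion is shown; the accretion-zone and local-maximum checks are assumed to be applied elsewhere, and all names are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <limits>

constexpr double kPi = 3.14159265358979323846;

// c_eff = c_s sqrt(1 + 0.74 / beta); beta defaults to infinity (no magnetic field).
double effectiveSoundSpeed(double cs, double beta = std::numeric_limits<double>::infinity()) {
  assert(cs > 0.0 && beta > 0.0);
  return cs * std::sqrt(1.0 + 0.74 / beta);
}

// rho_J = J^2 pi c_eff^2 / (G dx^2), with Jeans number J = 0.25 by default.
double jeansDensity(double cEff, double dx, double G, double J = 0.25) {
  assert(cEff > 0.0 && dx > 0.0 && G > 0.0);
  return J * J * kPi * cEff * cEff / (G * dx * dx);
}

struct SinkFormation {
  bool forms;       // is the Jeans criterion violated?
  double sinkMass;  // (rho - rho_J) dx^3
  double scale;     // factor rho_J / rho applied to the cell's conserved variables
};

// Jeans test and mass assignment for one cubic cell. The accretion-zone and
// local-density-maximum criteria are assumed to be checked by the caller.
SinkFormation formSink(double rho, double cEff, double dx, double G) {
  const double rhoJ = jeansDensity(cEff, dx, G);
  if (rho <= rhoJ) return {false, 0.0, 1.0};
  return {true, (rho - rhoJ) * dx * dx * dx, rhoJ / rho};
}
```

The sink mass plus the rescaled cell mass \(\rho_J \Delta x^3\) equals the original cell mass, which is the conservation property the ParticleSinkFormation test checks.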

Practical considerations

- The local maximum check uses a non-strict inequality (\(\rho_{\text{cell}} \ge \rho_{\text{neighbor}}\)), so ties do not prevent formation. In practice, exact density ties only occur in artificial initial conditions.

- Sink formation is applied after sink accretion in each timestep, so newly formed particles do not overlap with existing accretion zones.

Sink Particle Accretion

Overview

Sink particle accretion follows the Bondi-Hoyle-Lyttleton prescription of Krumholz et al. (2004). Gas is removed from cells surrounding each sink particle and added to the particle’s mass, with the accretion rate set by the local gas properties and the particle mass.

Accretion rate

The Bondi-Hoyle radius is

\[
r_{\text{BH}} = \frac{G M}{v_\infty^2 + c_{f,\infty}^2},
\]

where \(M\) is the particle mass, \(v_\infty\) is the gas velocity relative to the particle (mass-weighted mean over the accretion zone), and \(c_{f,\infty}\) is the fast magnetosonic speed. We take the upper bound of the fast speed (when the wave propagates perpendicular to the magnetic field): \(c_f^2 = c_s^2 + v_A^2 = c_s^2 (1 + 2/\beta)\). For non-MHD, this reduces to \(c_f = c_s\).

The accretion rate is

\[
\dot{M} = 4\pi \rho_\infty r_{\text{BH}}^2 \sqrt{\lambda^2 c_{f,\infty}^2 + v_\infty^2},
\]

where \(\rho_\infty\) is the mean gas density in the accretion zone and \(\lambda = e^{3/2}/4\).
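A sketch of the accretion-rate evaluation, assuming the Krumholz et al. (2004) forms \(r_{\text{BH}} = GM/(v_\infty^2 + c_{f,\infty}^2)\) and \(\dot{M} = 4\pi\rho_\infty r_{\text{BH}}^2 \sqrt{\lambda^2 c_{f,\infty}^2 + v_\infty^2}\), with \(c_f = c_s\sqrt{1 + 2/\beta}\) as stated above. Units are left to the caller, and the function names are hypothetical.

```cpp
#include <cassert>
#include <cmath>

// Upper bound of the fast magnetosonic speed: c_f^2 = c_s^2 (1 + 2 / beta).
double fastSpeed(double cs, double beta) {
  assert(cs > 0.0 && beta > 0.0);
  return cs * std::sqrt(1.0 + 2.0 / beta);
}

// Bondi-Hoyle radius r_BH = G M / (v_inf^2 + c_f^2).
double bondiHoyleRadius(double G, double M, double vInf, double cf) {
  assert(vInf * vInf + cf * cf > 0.0);
  return G * M / (vInf * vInf + cf * cf);
}

// Mdot = 4 pi rho_inf r_BH^2 sqrt(lambda^2 c_f^2 + v_inf^2), lambda = e^{3/2} / 4.
// vInf must be the gas speed relative to the particle.
double bondiHoyleRate(double G, double M, double rhoInf, double vInf, double cf) {
  const double pi = std::acos(-1.0);
  const double lambda = std::exp(1.5) / 4.0;
  const double rBH = bondiHoyleRadius(G, M, vInf, cf);
  return 4.0 * pi * rhoInf * rBH * rBH * std::sqrt(lambda * lambda * cf * cf + vInf * vInf);
}
```

Passing the relative velocity (gas minus particle) as `vInf` is what makes the rate Galilean invariant; for \(v_\infty \gg c_f\) the rate tends to \(4\pi\rho_\infty (GM)^2 / v_\infty^3\).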

Accretion kernel

The accretion zone is a sphere of radius \(r_{\text{acc}} = 3 \Delta x\) centered on the particle. Within this zone, each cell receives a Gaussian weight:

where \(r\) is the distance from the particle to the cell centre. The kernel radius \(r_K\) is resolution-adaptive:

The accretion rate deposited in each cell is \(-\dot{M}\, w_i / \sum_j w_j\) (negative values denote accretion). Optionally, a uniform kernel (\(w = 1\) for all cells in the \((7 \Delta x)^3\) stencil) can be used for testing by setting particles.sink_particle_use_uniform_kernel = 1.

Accretion limiters

Two corrections are applied to the per-cell accretion rate, in the following order:

- Mass removal cap: No more than 25% of a cell’s mass may be removed in a single timestep (Krumholz et al. 2004). This prevents artificial sound waves from being launched by rapid density changes.

- Jeans density floor: If the post-accretion cell density would still exceed the Jeans density \(\rho_J\), the accretion rate is increased so that the final density equals \(\rho_J\). This is safe because such cells are at the centre of highly supersonic convergence and are causally disconnected from their surroundings.
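The two limiters can be sketched as follows, applied in the stated order to each cell's kernel-weighted share of \(\dot{M}\). The kernel weights are taken as inputs (the resolution-adaptive Gaussian is not reproduced here), and the function name and argument layout are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Per-cell mass change over one step (negative = removed from the gas).
// weights: kernel weights w_i (non-negative; normalised here), rho: cell densities.
std::vector<double> accretedMassPerCell(double mdot, double dt, const std::vector<double>& weights,
                                        const std::vector<double>& rho, double cellVolume,
                                        double rhoJ) {
  assert(weights.size() == rho.size() && mdot >= 0.0 && dt > 0.0 && cellVolume > 0.0);
  double wSum = 0.0;
  for (double w : weights) wSum += w;
  assert(wSum > 0.0);
  std::vector<double> dm(rho.size(), 0.0);
  for (std::size_t i = 0; i < rho.size(); ++i) {
    const double cellMass = rho[i] * cellVolume;
    double dmi = -mdot * dt * weights[i] / wSum;  // kernel-weighted share of Mdot * dt
    // 1) Mass-removal cap: remove at most 25% of the cell mass this step.
    dmi = std::max(dmi, -0.25 * cellMass);
    // 2) Jeans floor: if the cell would still exceed rho_J, accrete down to rho_J.
    if (cellMass + dmi > rhoJ * cellVolume) dmi = (rhoJ - rho[i]) * cellVolume;
    dm[i] = dmi;
  }
  return dm;
}
```

Because the Jeans floor is applied after the cap, a cell far above \(\rho_J\) can lose more than 25% of its mass in one step.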

Momentum accretion

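When gas is accreted, the particle gains both mass and momentum. The new particle velocity is computed from momentum conservation:

\[
\vec{v}_{\text{new}} = \frac{M \vec{v}_{\text{old}} + \Delta m\, \vec{v}_{\text{gas}}}{M + \Delta m},
\]

where \(\Delta m\) is the total accreted mass and \(\vec{v}_{\text{gas}}\) is the mass-weighted gas velocity over the accreted cells. The gas state is then updated by scaling all conserved quantities in each cell by \((1 + \dot{M}_{\text{cell}} \Delta t / (\rho V))\), where \(V\) is the cell volume; because density and momentum are scaled by the same factor, the gas velocity field is preserved.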
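A single-cell sketch of this bookkeeping: the sink velocity follows from momentum conservation, while the gas cell is rescaled by \((1 + \dot{M}_{\text{cell}}\Delta t/(\rho V))\). Here one cell's velocity stands in for the mass-weighted mean over all accreted cells, and the names are hypothetical.

```cpp
#include <array>
#include <cassert>

using Vec3 = std::array<double, 3>;

// New sink velocity from momentum conservation:
// v_new = (M v_old + dm v_gas) / (M + dm).
Vec3 accreteMomentum(double M, const Vec3& vSink, double dm, const Vec3& vGas) {
  assert(M > 0.0 && dm >= 0.0);
  Vec3 vNew{};
  for (int d = 0; d < 3; ++d) vNew[d] = (M * vSink[d] + dm * vGas[d]) / (M + dm);
  return vNew;
}

struct GasCell {
  double rho;      // density
  Vec3 momentum;   // momentum density
  double energy;   // total energy density
};

// Gas-side update: every conserved quantity is multiplied by
// f = 1 + mdotCell dt / (rho V), with mdotCell < 0 for accretion.
GasCell scaleGasCell(const GasCell& c, double mdotCell, double dt, double V) {
  const double f = 1.0 + mdotCell * dt / (c.rho * V);
  assert(f >= 0.0);
  GasCell out = c;
  out.rho *= f;
  for (double& p : out.momentum) p *= f;
  out.energy *= f;
  return out;
}
```

Rescaling every conserved quantity by the same factor leaves the gas velocity unchanged, and the momentum removed from the gas reappears exactly in the sink.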

Galilean invariance

The accretion rate is computed using gas velocities in the particle frame (\(\vec{v}_\infty = \vec{v}_{\text{gas}} - \vec{v}_{\text{particle}}\)), ensuring Galilean invariance.

Runtime Parameters

| Parameter | Type | Default | Description |
|---|---|---|---|
| particles.sink_particle_use_uniform_kernel | Boolean | 0 | Use uniform accretion kernel (for testing) |

Examples

ParticleSinkFormation Test

The ParticleSinkFormation test validates combined sink particle formation and accretion. The test checks:

- Exactly one star forms in the first timestep.

- Mass is conserved to machine precision during sink formation.

- Mass is conserved to machine precision during sink accretion.

ParticleSink Test

The ParticleSink test validates Bondi-Hoyle accretion and Galilean invariance. It runs in three phases:

- Base simulation: Runs with zero boost velocity and validates the density profile against an analytical solution.

- Boosted simulation: Runs with a boost velocity of \(10^8\) cm/s and verifies that the density profile matches the analytical solution, demonstrating Galilean invariance.

- Multi-timestep evolution: Continues the boosted simulation for additional timesteps and validates total mass conservation to machine precision.

StochasticStellarPop Particle Type

StochasticStellarPop particles carry the following real-valued attributes:

- Mass: Current particle mass (\(M_{\star}\))

- Velocity: Three-dimensional velocity vector \((v_x, v_y, v_z)\)

- Birth time: Simulation time when the particle formed

- Death time: When the particle explodes as a supernova

- Mass at birth: Initial mass before mass loss

- Luminosity: Radiation luminosity (if radiation is enabled)

Each particle also stores an integer evolution stage that tracks its lifecycle:

- SNProgenitor: High-mass star (\(M > 8 M_{\odot}\)) that will explode as a supernova

- SNRemnant: Compact remnant left after a supernova explosion

- LowMassStar: Low-mass star that will not explode (not used in the current star formation implementation)

- LowMassComposite: Composite particle representing a population of low-mass stars

Star Formation

Overview

The star formation module adds star particles through a stochastic prescription that plugs into the Stochastic Stellar Population specialisation.

- Runs once per hydro timestep and evaluates each cell independently.

- Always spawns a LowMassComposite particle when star formation is triggered.

- Adds high-mass particles (\(> 8~M_{\odot}\)) probabilistically so the Chabrier (2005) initial mass function (IMF) is satisfied in expectation.

Jeans instability filter

Eligible cells are first identified through a Jeans-length check before any stochastic sampling occurs.

- Compute the Jeans density \(\rho_J = J^2 \pi c_{\text{eff}}^2 / (G \Delta x^2)\), where \(J = 0.25\) is the Jeans number and \(c_{\text{eff}} = c_s \sqrt{1 + 0.74 / \beta}\) is the effective sound speed, accounting for thermal pressure and magnetic pressure with \(\beta = P_{\text{thermal}} / P_{\text{magnetic}}\) (Mouschovias & Spitzer, 1976, ApJ, 210, 326; see also Myers et al., 2013, ApJ, 766, 97).

- Mark the cell as eligible when \(\rho > \rho_J\).

- Only eligible cells continue to the sampling steps below.

Controlling the formation rate

Two efficiency parameters tune how aggressively eligible gas is converted into star particles during each hydro step.

- \(\epsilon_{ff}\): efficiency per free-fall time, defined through \(\epsilon_{ff} = \dot M_{\star}\, t_{ff} / M_{cell}\), i.e. the fraction of the cell mass converted into stars per free-fall time.

- \(\epsilon_{\star}\): fraction of the cell mass used when a particle is spawned.

- The target stellar mass for the step is \(\epsilon_{ff} M_{cell} (\Delta t / t_{ff})\).

- Bernoulli probability for spawning: \( P = \frac{\epsilon_{ff}}{\epsilon_{\star}} \frac{\Delta t}{t_{ff}} \).

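- The expectation value \(\langle M_{\star} \rangle = P \epsilon_{\star} M_{cell}\) equals the target mass as long as \(P \le 1\), i.e. \(\Delta t \le (\epsilon_{\star}/\epsilon_{ff})\, t_{ff}\). This holds whenever \(\Delta t < t_{ff}\) and \(\epsilon_{ff} \le \epsilon_{\star}\); the CFL condition typically enforces \(\Delta t < t_{ff}\).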
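The Bernoulli draw can be sketched with the C++ standard library. Using `std::mt19937_64` and `std::bernoulli_distribution` is an assumption for illustration (a GPU implementation would use a device-side generator), and the function names are hypothetical.

```cpp
#include <cassert>
#include <random>

// Bernoulli probability P = (eps_ff / eps_star) (dt / t_ff).
double spawnProbability(double epsFF, double epsStar, double dt, double tff) {
  assert(epsStar > 0.0 && tff > 0.0);
  return (epsFF / epsStar) * (dt / tff);
}

// Stellar mass formed in one eligible cell over one step: eps_star * M_cell
// with probability P, otherwise zero. Requires P <= 1.
double sampleStellarMass(double Mcell, double epsFF, double epsStar, double dt, double tff,
                         std::mt19937_64& rng) {
  const double P = spawnProbability(epsFF, epsStar, dt, tff);
  assert(P >= 0.0 && P <= 1.0);
  std::bernoulli_distribution spawn(P);
  return spawn(rng) ? epsStar * Mcell : 0.0;
}
```

Averaged over many draws, the formed mass approaches the target \(\epsilon_{ff} M_{cell}\,\Delta t/t_{ff}\), even though each individual draw forms either nothing or \(\epsilon_\star M_{cell}\).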

Sampling the stellar population

Once a cell passes the filter and the Bernoulli draw succeeds, we construct the composite stellar population represented by the spawned particles.

- Every accepted draw creates one low-mass particle that represents all stars with \(M < 8 M_{\odot}\).

- The IMF is the Chabrier (2005) form: log-normal below \(1 M_{\odot}\) and a power law \(dN/dm \propto m^{-2.35}\) above it, so high-mass stars sample the power-law tail.

- Pre-computed IMF integrals provide the mass fraction \(f_{\star,high}\) and mean mass \(\langle m \rangle_{\star,high}\) of the high-mass component.

- The expected number of massive stars is \(f_{\star,high} \epsilon_{\star} M_{cell} / \langle m \rangle_{\star,high}\); a Poisson variate with that mean sets the actual count.

- Each massive star draws its mass from the high-mass end of the IMF, while the low-mass particle retains the remaining fraction \(1 - f_{\star,high}\) of the spawned mass.

- If the Poisson draw returns zero, only the low-mass particle is inserted and it inherits the local gas velocity.
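The split between massive stars and the low-mass composite can be sketched as below. The IMF integrals \(f_{\star,high}\) and \(\langle m \rangle_{\star,high}\) are passed in as placeholders rather than Quokka's pre-computed Chabrier values, the individual massive-star masses are not sampled, and the names are hypothetical.

```cpp
#include <cassert>
#include <random>

struct StellarPopulation {
  int nMassive;                 // number of > 8 Msun star particles to spawn
  double lowMassCompositeMass;  // mass of the LowMassComposite particle
};

// Split the spawned mass eps_star * M_cell into a Poisson-distributed number of
// massive stars and a low-mass composite holding the fraction (1 - fHigh).
// fHigh and meanMassHigh stand in for the pre-computed IMF integrals.
StellarPopulation samplePopulation(double Mcell, double epsStar, double fHigh,
                                   double meanMassHigh, std::mt19937_64& rng) {
  assert(Mcell > 0.0 && epsStar > 0.0 && fHigh >= 0.0 && fHigh <= 1.0 && meanMassHigh > 0.0);
  const double spawned = epsStar * Mcell;
  const double lowMass = (1.0 - fHigh) * spawned;
  const double expectedN = fHigh * spawned / meanMassHigh;
  if (expectedN <= 0.0) return {0, lowMass};  // poisson_distribution requires mean > 0
  std::poisson_distribution<int> count(expectedN);
  return {count(rng), lowMass};
}
```

A Poisson draw of zero leaves only the low-mass composite, matching the last item in the list above.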

Assigning particle velocities

Sampled star particles receive velocities that combine the local bulk flow with an isotropic runaway kick.

- Each massive star draws a speed from a power-law distribution \(p(v) \propto v^{-1.8}\) truncated between 3 and 385 km s\(^{-1}\), then converts it to cgs units.

- A random direction is chosen by sampling \(\cos \theta\) uniformly in \([-1, 1]\) and \(\phi\) in \([0, 2\pi)\); the resulting kick is added to the gas velocity of the parent cell.

- The total momentum of all massive stars is accumulated, and the low-mass composite particle receives the opposite momentum so that the cell-level particle system conserves momentum.
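The kick assignment can be sketched as an inverse-CDF draw from \(p(v) \propto v^{-1.8}\) on [3, 385] km s\(^{-1}\), i.e. \(v = [v_{\min}^{1-a} + u\,(v_{\max}^{1-a} - v_{\min}^{1-a})]^{1/(1-a)}\) with \(a = 1.8\) and \(u\) uniform, followed by an isotropic direction and a compensating kick for the low-mass composite. Velocities are kept in km/s and are relative to the parent-cell gas velocity; the RNG choice and all names are assumptions.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <random>
#include <vector>

using Vec3 = std::array<double, 3>;

// Inverse-CDF sample from p(v) proportional to v^{-a} on [vMin, vMax] (requires a != 1).
double samplePowerLaw(double a, double vMin, double vMax, double u) {
  assert(a != 1.0 && vMin > 0.0 && vMax > vMin && u >= 0.0 && u <= 1.0);
  const double k = 1.0 - a;
  const double lo = std::pow(vMin, k);
  const double hi = std::pow(vMax, k);
  return std::pow(lo + u * (hi - lo), 1.0 / k);
}

// Isotropic unit vector: cos(theta) uniform in [-1, 1], phi uniform in [0, 2 pi).
Vec3 randomDirection(std::mt19937_64& rng) {
  std::uniform_real_distribution<double> uCos(-1.0, 1.0);
  std::uniform_real_distribution<double> uPhi(0.0, 2.0 * std::acos(-1.0));
  const double cosT = uCos(rng);
  const double sinT = std::sqrt(1.0 - cosT * cosT);
  const double phi = uPhi(rng);
  return {sinT * std::cos(phi), sinT * std::sin(phi), cosT};
}

// Kicks (km/s, relative to the parent-cell gas) for each massive star, followed by
// the low-mass composite's kick, chosen so the total kick momentum is zero.
std::vector<Vec3> assignKicks(const std::vector<double>& massiveMasses, double lowMass,
                              std::mt19937_64& rng) {
  assert(lowMass > 0.0);
  std::uniform_real_distribution<double> u01(0.0, 1.0);
  std::vector<Vec3> kicks;
  Vec3 pTotal{0.0, 0.0, 0.0};
  for (double m : massiveMasses) {
    const double speed = samplePowerLaw(1.8, 3.0, 385.0, u01(rng));
    const Vec3 n = randomDirection(rng);
    const Vec3 v{speed * n[0], speed * n[1], speed * n[2]};
    for (int d = 0; d < 3; ++d) pTotal[d] += m * v[d];
    kicks.push_back(v);
  }
  kicks.push_back({-pTotal[0] / lowMass, -pTotal[1] / lowMass, -pTotal[2] / lowMass});
  return kicks;  // last entry belongs to the low-mass composite particle
}
```

Adding the parent-cell gas velocity to every returned kick gives the final particle velocities; multiplying by \(10^5\) converts km/s to cm/s.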

Practical considerations

A few implementation notes help interpret corner cases and limitations of the current recipe.

- Star formation is operator-split from the hydrodynamics. When \(t_{ff}\) is unresolved (\(\Delta t \gtrsim t_{ff}\)), the true star formation rate is not captured, and this scheme provides one possible approximation; no explicit limiter is enforced beyond the CFL-controlled hydro step.

- All spawned particles are inserted at the cell centre. Other physics modules are responsible for any subsequent repositioning or feedback coupling.

Supernova Feedback

Overview

Quokka includes a particle framework for modeling stellar populations and their feedback effects on the interstellar medium (ISM). The primary feedback mechanism is supernova (SN) explosions, which inject energy and momentum into the surrounding gas. The SN module implements several physically-motivated schemes that adapt to the local resolution and density conditions.


Physical Parameters

When a progenitor star reaches its death time, it explodes as a Type II supernova with the following canonical parameters:

| Parameter | Symbol | Value | Description |
|---|---|---|---|
| Blast energy | \(E_{\text{blast}}\) | \(10^{51}\) erg | Thermal energy injected into the ISM |
| Ejecta mass | \(m_{\text{ej}}\) | \(10 M_{\odot}\) | Mass expelled during explosion |
| Terminal momentum | \(p_{\text{snr},0}\) | \(2.8 \times 10^5 M_{\odot}\,\text{km s}^{-1}\) | Asymptotic momentum of the SNR (configurable via particles.SN_p_term_Msunkmps) |
| Remnant mass | \(m_{\text{dead}}\) | \(\geq 1.4 M_{\odot}\) | Mass of the compact remnant |
| Kinetic energy | \(E_{\text{kin}}\) | \(0.5 m_{\text{ej}} v_{\text{star}}^2\) | Kinetic energy of the ejecta |

The terminal momentum is density-dependent and scales as:

where \(n_{\text{H}}\) is the ambient hydrogen number density averaged over the deposition kernel.

Deposition Kernel

SN feedback is deposited into a \((2 \times 3 + 1)^3 = 343\) cell cubic stencil centered on the particle’s location. The kernel weights are pre-computed to approximate a spherical distribution with radius \(r = 3 \Delta x\), where \(\Delta x\) is the cell width, around the host cell center. The kernel weights \(W_{ijk}\) are normalized such that \(\sum_{i,j,k} W_{ijk} = 1\).

Resolution-Adaptive Schemes

The feedback implementation uses the resolution factor \(R_M\) to determine whether the Sedov-Taylor phase is resolved:

\(R_M = \frac{M_{\text{SNR}}}{M_{\text{sf}}}\)

where:

- \(M_{\text{SNR}} = M_{\text{gas}} + m_{\text{ej}}\) is the total SNR mass (gas in stencil plus ejecta)

- \(M_{\text{sf}} = 1679 \, M_{\odot} \, n_{\text{H}}^{-0.26} \left(p_{\text{snr},0} / p_{\text{snr},0}^{\text{canonical}}\right)^2\) is the shell-formation mass, scaled so that the kinetic energy \(p_{\text{snr}}^2 / (2 M_{\text{sf}})\) is invariant when \(p_{\text{snr},0}\) is changed

When \(R_M < 1\), the Sedov-Taylor phase is resolved and the blast wave dynamics can be captured. When \(R_M \geq 1\), the resolution is insufficient and the code transitions to momentum-dominated feedback following Kim & Ostriker (2017).
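The resolution factor can be evaluated as in the sketch below. It assumes \(R_M = M_{\text{SNR}} / M_{\text{sf}}\), which is consistent with the estimate \(R_M \approx (3 \Delta x / R_{\text{sf}})^3\) used in the SN test later on this page. Masses are in \(M_\odot\) and \(n_{\text{H}}\) is in cm\(^{-3}\). The helper names are hypothetical.

```cpp
#include <cmath>

// Canonical terminal momentum p_snr,0 in Msun km/s.
constexpr double kPsnrCanonical = 2.8e5;

// Shell-formation mass in Msun:
// M_sf = 1679 Msun * n_H^-0.26 * (p_snr0 / p_canonical)^2.
double shellFormationMass(double nH, double pSnr0 = kPsnrCanonical) {
  const double ratio = pSnr0 / kPsnrCanonical;
  return 1679.0 * std::pow(nH, -0.26) * ratio * ratio;
}

// R_M = M_SNR / M_sf, with M_SNR = M_gas (in the stencil) + m_ej.
double resolutionFactor(double mGasStencil, double mEjecta, double nH,
                        double pSnr0 = kPsnrCanonical) {
  return (mGasStencil + mEjecta) / shellFormationMass(nH, pSnr0);
}

// True when the Sedov-Taylor phase is considered resolved (R_M < 1).
bool sedovTaylorResolved(double RM) { return RM < 1.0; }
```

Doubling particles.SN_p_term_Msunkmps quadruples \(M_{\text{sf}}\). This leaves \(p_{\text{snr}}^2 / (2 M_{\text{sf}})\) unchanged but lowers \(R_M\) by a factor of four.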

Available Schemes

Quokka provides four SN feedback schemes controlled by particles.SN_scheme:

- SN_thermal_only: pure thermal energy injection.

  - Deposits \(E_{\text{blast}} + E_{\text{kin}}\) into the gas total energy.

  - Deposits the ejecta mass \(m_{\text{ej}}\) into the gas and the ejecta momentum \(m_{\text{ej}} \vec{v}_{\text{ej}}\) into the gas momentum. No further momentum is injected.

  - This is the simplest scheme and is appropriate only when the Sedov-Taylor phase is well resolved. It should only be used for testing.

- Thermal and momentum schemes: the following schemes include resolution-dependent momentum injection. In these schemes, an energy of \(E_{\text{blast}} + E_{\text{kin}}\) is injected into the gas total energy, and a fraction \(f\) of the asymptotic momentum \(p_{\text{snr}}\) is injected as momentum: \(p_{\text{inject}} = f \, p_{\text{snr}}\). The difference between the injected total energy and the injected kinetic energy remains as thermal energy. Note that some thermal energy is always injected, even when \(f = 1\). The momentum fraction \(f\) is determined by the resolution factor \(R_M\) as follows:

  a. SN_thermal_or_thermal_momentum (default):

     - When \(R_M < 0.027\): pure thermal injection (like SN_thermal_only) with \(f = 0\).

     - When \(0.027 \leq R_M < 1\): partial momentum injection with \(f = 0.529 \sqrt{R_M}\). Following the Kim & Ostriker (2017) calibration, \(f^2 = 0.28 R_M\), which means that 28% of the total energy is kinetic energy in this regime.

     - When \(R_M \geq 1\): full momentum injection with \(f = 1\).

  b. SN_thermal_kinetic_or_thermal_momentum:

     - When \(R_M < 0.027\): pure kinetic injection with \(f = \sqrt{2 R_M}\).

     - When \(0.027 \leq R_M < 1\): partial momentum injection with \(f = 0.529 \sqrt{R_M}\).

     - When \(R_M \geq 1\): full momentum injection with \(f = 1\).

  c. SN_pure_kinetic_or_thermal_momentum:

     - Always injects the full terminal momentum, with \(f = 1\), regardless of resolution.

     - When \(R_M < 0.027\): Pure thermal (like
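The case split above can be summarized as a single function of \(R_M\). This sketch encodes only the cases stated in the list, and the enum and function names are hypothetical. SN_pure_kinetic_or_thermal_momentum is modelled with its stated \(f = 1\), because its remaining branch is incomplete in the text.

```cpp
#include <cmath>
#include <stdexcept>

enum class SNScheme {
  ThermalOrThermalMomentum,        // SN_thermal_or_thermal_momentum (default)
  ThermalKineticOrThermalMomentum, // SN_thermal_kinetic_or_thermal_momentum
  PureKineticOrThermalMomentum     // SN_pure_kinetic_or_thermal_momentum
};

// Fraction f of the terminal momentum p_snr to inject, given R_M.
double momentumFraction(SNScheme scheme, double RM) {
  constexpr double kRMWellResolved = 0.027; // below this threshold no partial-momentum branch applies
  switch (scheme) {
  case SNScheme::ThermalOrThermalMomentum:
    if (RM < kRMWellResolved) return 0.0;          // pure thermal
    return RM < 1.0 ? 0.529 * std::sqrt(RM) : 1.0; // partial or full momentum
  case SNScheme::ThermalKineticOrThermalMomentum:
    if (RM < kRMWellResolved) return std::sqrt(2.0 * RM); // kinetic injection
    return RM < 1.0 ? 0.529 * std::sqrt(RM) : 1.0;
  case SNScheme::PureKineticOrThermalMomentum:
    return 1.0; // full terminal momentum regardless of resolution
  }
  throw std::invalid_argument("unknown SN scheme");
}
```

For the three densities in the SN test (\(R_M \approx 10\), \(0.3\), \(0.01\)), the default scheme gives \(f = 1\), \(f \approx 0.29\), and \(f = 0\), respectively.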

Momentum Deposition

For schemes with momentum injection, the following momentum deposition procedure guarantees Galilean invariance for a single SN event:

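- Compute the COM velocity of the SNR (gas + ejecta):

  \(\vec{v}_{\text{COM}} = \frac{\vec{P}_{\text{gas}} + m_{\text{ej}} \vec{v}_{\text{ej}}}{M_{\text{gas}} + m_{\text{ej}}}\)

where \(\vec{P}_{\text{gas}}\) is the total momentum of gas in the stencil (kernel-weighted).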

- Deposit momentum such that each cell receives:

where \(\vec{p}_{\text{radial}} = f \, p_{\text{snr}} \, W_{ijk} \, \hat{\mathbf{r}}_{ijk}\) is the radial momentum component.

- Energy deposition includes a cross term:

The cross term accounts for the kinetic energy change from the radial expansion, ensuring Galilean invariance. This term sums to zero over all cells in the stencil (momentum is conserved), which ensures energy conservation.

Background smoothing. To ensure the cross term cancels when summing over all cells in the stencil, the velocity field (but not the density) must be smoothed such that . In our tests, this smoothing also dramatically reduces peak velocities in SNR. In a shearing/turbulent background, this artificially homogenizes the gas velocity and changes kinetic energy unrelated to the SN. We provide a parameter particles.SN_smooth_gas_velocity=0 to turn off this smoothing: and . In this case, the cross term sums to a non-zero value: , where is the velocity of cell relative to the COM frame.
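The Galilean-invariance bookkeeping can be checked numerically with the sketch below. It implements the COM velocity of gas plus ejecta and the radial momentum \(\vec{p}_{\text{radial}} = f \, p_{\text{snr}} \, W_{ijk} \, \hat{\mathbf{r}}_{ijk}\). The per-cell cross-term expression is not reproduced here, so the sketch assumes that, with a smoothed (uniform) velocity field, the cross term in cell \(ijk\) is \(\vec{v}_{\text{COM}} \cdot \vec{p}_{\text{radial},ijk}\). It also assumes the host cell, which has no radial direction, receives no radial momentum. All names are hypothetical.

```cpp
#include <array>
#include <cmath>
#include <cstddef>
#include <vector>

using Vec3 = std::array<double, 3>;

struct StencilCell {
  Vec3 offset; // cell-centre offset from the host cell, in cell widths
  double W;    // kernel weight; weights over the stencil sum to 1
};

// v_COM = (P_gas + m_ej v_ej) / (M_gas + m_ej)
Vec3 comVelocity(const Vec3 &Pgas, double Mgas, double mEj, const Vec3 &vEj) {
  Vec3 v{};
  for (int d = 0; d < 3; ++d) v[d] = (Pgas[d] + mEj * vEj[d]) / (Mgas + mEj);
  return v;
}

// p_radial,ijk = f * p_snr * W_ijk * rhat_ijk (zero for the host cell).
std::vector<Vec3> radialMomenta(const std::vector<StencilCell> &cells, double f,
                                double pSnr) {
  std::vector<Vec3> p(cells.size(), Vec3{0.0, 0.0, 0.0});
  for (std::size_t n = 0; n < cells.size(); ++n) {
    const Vec3 &o = cells[n].offset;
    const double r = std::sqrt(o[0] * o[0] + o[1] * o[1] + o[2] * o[2]);
    if (r == 0.0) continue;
    for (int d = 0; d < 3; ++d) p[n][d] = f * pSnr * cells[n].W * o[d] / r;
  }
  return p;
}

// Sum over the stencil of the assumed cross term v_COM . p_radial,ijk.
double crossTermSum(const Vec3 &vCom, const std::vector<Vec3> &pRadial) {
  double sum = 0.0;
  for (const Vec3 &p : pRadial)
    sum += vCom[0] * p[0] + vCom[1] * p[1] + vCom[2] * p[2];
  return sum;
}
```

For any point-symmetric kernel the radial momenta cancel pairwise, so the sum vanishes for every uniform \(\vec{v}_{\text{COM}}\). If the cells instead keep their own velocities (particles.SN_smooth_gas_velocity = 0), the analogous sum of \(\vec{v}_{ijk} \cdot \vec{p}_{\text{radial},ijk}\) is generally non-zero.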

Runtime Parameters

| Parameter | Type | Default | Description |
|---|---|---|---|
| particles.SN_scheme | String | SN_thermal_or_thermal_momentum | Feedback scheme (see above) |
| particles.SN_p_term_Msunkmps | Float | 2.8e5 | Terminal momentum \(p_{\text{snr},0}\) in units of \(M_\odot\,\mathrm{km\,s}^{-1}\). The shell-formation mass \(M_\mathrm{sf}\) is scaled as \((p/p_\mathrm{canonical})^2\) to preserve the kinetic energy \(p^2/(2M_\mathrm{sf})\). |
| particles.disable_SN_feedback | Boolean | 0 | Disable SN feedback entirely |
| particles.verbose | Integer | 0 | Verbosity level for particle diagnostics |
| particles.stellar_velocity_limit | Float | \(10^8\) cm/s | Maximum allowed stellar velocity (aborts if exceeded) |
| particles.SN_smooth_gas_velocity | Integer | 1 | Smooth gas velocity in the stencil to enforce energy conservation |

Implementation Notes

Operator Splitting

SN feedback is operator-split from the hydrodynamics and applied after each timestep:

- Hydrodynamics advances the gas state by \(\Delta t\)

- Particle positions are updated by drift

- SN candidates (particles reaching death time) are identified

- Feedback is deposited into a temporary buffer

- Buffer is added to the state using a reproducibility-aware algorithm

- The particle evolution stage is updated to SNRemnant

Numerical Reproducibility

The deposition uses a roundoff-resistant algorithm to ensure bit-for-bit reproducibility across different processor counts. The algorithm:

- Deposits feedback into a temporary buffer with a count field

- Communicates the buffer across ranks, which introduces roundoff errors due to non-associative floating-point arithmetic

- Removes low-order bits, controlled by particles.reproducibility_roundoff_redundancy, to eliminate this error
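The idea of discarding low-order bits can be illustrated with the sketch below. It is a hypothetical helper, not Quokka's implementation. Here nbits stands in for whatever particles.reproducibility_roundoff_redundancy controls, and rounding is done by scaling the mantissa returned by std::frexp. Two sums of the same terms taken in different orders differ in their last bit, but agree once the 8 lowest-order bits are rounded away.

```cpp
#include <cmath>

// Round x to (53 - nbits) significant bits, discarding the nbits lowest-order
// bits of the double mantissa (round-to-nearest on the retained bits).
double dropLowOrderBits(double x, int nbits) {
  if (x == 0.0 || !std::isfinite(x)) return x;
  int exponent = 0;
  // x = mantissa * 2^exponent with 0.5 <= |mantissa| < 1
  const double mantissa = std::frexp(x, &exponent);
  const double scale = std::ldexp(1.0, 53 - nbits); // 2^(53 - nbits)
  const double kept = std::nearbyint(mantissa * scale) / scale;
  return std::ldexp(kept, exponent);
}
```

Rounding of this kind only makes two results identical when they do not straddle a rounding boundary. On its own it reduces, rather than strictly eliminates, the chance of a mismatch, and this sketch does not use the count field mentioned above.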

AMR Considerations

- Feedback is always deposited at the finest level covering the particle

- If a particle sits at a coarse-fine boundary, feedback is deposited into fine-level cells (a known limitation to be addressed in the future)

- The reflux operation ensures conservation across AMR boundaries

- The kernel stencil size is fixed in cell widths, so the physical size adapts to local resolution

- Using setForceFinestLevel(true) is recommended to ensure that all SN progenitors, and thus all SN events, exist on the finest level.

Limitations

- Accurate radiative cooling must be present to resolve the pressure-confined snowplow phase

- The spherical kernel is approximated on a Cartesian grid, introducing mild anisotropy

- The terminal momentum formula assumes solar metallicity and Type II SNe physics

- No explicit treatment of metal enrichment (can be added via passive scalars)

Physical Motivation

The resolution-dependent momentum injection is based on the realization that under-resolved SN explosions lose energy to artificial radiative losses within a few timesteps. The momentum-based schemes compensate by directly injecting momentum when the Sedov-Taylor phase cannot be captured.

The terminal momentum \(p_{\text{snr}}\) represents the asymptotic momentum that a supernova remnant reaches in the pressure-confined snowplow phase, after most of the kinetic energy has been radiated away. This value (Kim & Ostriker 2017) is calibrated from high-resolution simulations and depends weakly on the ambient density.

The \(R_M\) factor effectively measures whether the simulation has sufficient resolution and density to capture the Sedov-Taylor expansion. When \(R_M \ll 1\), the blast wave will expand through many cells before reaching the shell-formation radius, allowing the hydrodynamics to naturally capture the momentum buildup. When \(R_M \sim 1\) or larger, the explosion is under-resolved and the code preemptively injects the expected terminal momentum.

Examples

Basic SN Test

The SN problem provides a canonical test with one SN progenitor in a uniform medium:

particles.SN_scheme = SN_thermal_or_thermal_momentum

problem.boost_vel_x = 1.0e8 # boost velocity in x direction

This test is run three times, with ambient densities of \(10\), \(1.0\), and \(0.1 ~\mathrm{cm}^{-3}\), corresponding to shell-formation radii of 7, 22, and 70 pc, respectively. The spatial resolution is 5 pc, and the default SN_thermal_or_thermal_momentum scheme is used. The three ambient densities imply \(R_M \approx (3 \Delta x / R_{\text{sf}})^3 = 10\), \(0.3\), and \(0.01\), placing the scheme in the under-resolved, partially resolved, and well-resolved regimes, respectively.

In each of the three tests, the simulation is run twice, once initially at rest and once with an initial boost velocity of \(200\) km/s, to demonstrate Galilean invariance. The temperature and velocity profiles in the boosted and rest frames agree to within a small tolerance.

Random Blast Test

The RandomBlast problem provides a testbed for multiple SN explosions with various ambient conditions and bulk flows.

Dust Module

Warning: Beta feature

The dedicated dust dynamics module has not yet been exercised in a published science application with Quokka and should currently be treated as beta.

This module primarily implements two components: the dust transport term and the dust drag source term.

Equations for Gas-Dust System

where \(\omega\) controls the level of frictional heating, with \(\omega = 0\) turning it off and \(\omega = 1\) depositing all dissipation into the gas.

Variable Storage

The dust cell-centred conserved variables (\(\rho_{\mathrm{d}}\), \(\rho_{\mathrm{d}}\vec{v}_{\mathrm{d}}\)) are added to the MultiFab.

Reconstruction and Riemann Solver

Dust reconstruction is performed together with gas using the same method. The Riemann Solver used is as follows:

In one dimension along the x-direction, given the left/right states \(W_d^{L/R}\), one can provide the Riemann flux for conserved variables as follows. The density flux reads (Huang & Bai 2022):

Similar expressions hold for the momentum flux for all directions.

This is implemented in src/dust/dustRiemannSolver.hpp and called in DustSystem::ComputeDustFluxes to compute the dust advection flux.
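For orientation, a common upwind case split for the density flux of a pressureless fluid is sketched below. It is an assumption that this matches the Huang & Bai (2022) expression implemented in src/dust/dustRiemannSolver.hpp, and pressurelessDensityFlux is a hypothetical name. Streams moving in the same direction are upwinded, converging streams pass both fluxes, and diverging streams open a vacuum with zero flux.

```cpp
// Density flux at an interface between left/right pressureless (dust) states.
double pressurelessDensityFlux(double rhoL, double vL, double rhoR, double vR) {
  if (vL > 0.0 && vR >= 0.0) return rhoL * vL;            // both move right: upwind from left
  if (vL <= 0.0 && vR < 0.0) return rhoR * vR;            // both move left: upwind from right
  if (vL > 0.0 && vR < 0.0) return rhoL * vL + rhoR * vR; // converging streams meet
  return 0.0;                                             // diverging streams: vacuum, no flux
}
```

Replacing \(\rho\) by the corresponding momentum density in each branch gives an analogous momentum flux, in the spirit of the remark that similar expressions hold for the momentum flux.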

Time Integrator

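A Strang-split method (Tedeschi-Prades et al. 2025) is used to integrate the dust-gas system. For the combined gas and dust state \(\mathbf{U}\), the Strang-split update can be expressed as:

\(\mathbf{U}^{n+1} = \mathcal{D}\left(\tfrac{\Delta t}{2}\right) \, \mathcal{H}(\Delta t) \, \mathcal{D}\left(\tfrac{\Delta t}{2}\right) \, \mathbf{U}^{n}\)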

where \(\mathcal{D}\) is the dust-gas drag operator and \(\mathcal{H}\) is the hydrodynamics operator (including both gas and dust transport). The hydrodynamics operator \(\mathcal{H}\) is handled using the explicit RK2 scheme. The drag operator \(\mathcal{D}\) is implemented in src/dust/DustDrag.hpp and called in QuokkaSimulation::addStrangSplitSourcesWithBuiltin via DustDrag::computeDustDrag.

Optional Picard iteration for dust–gas drag

Users may optionally enable Picard iteration for the dust–gas drag operator \(\mathcal{D}\). When the stopping time depends on the gas or dust velocity, enabling iteration is required to maintain an implicit dust drag update. See Runtime parameters for details.
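The sketch below shows what an implicit drag update with optional Picard iteration can look like for one dust species and one velocity component. It is hypothetical and is not the DustDrag implementation. It applies backward Euler to the relative velocity \(\Delta v = v_{\mathrm{d}} - v_{\mathrm{g}}\). This velocity decays as \(d\Delta v/dt = -(1 + \rho_{\mathrm{d}}/\rho_{\mathrm{g}}) \Delta v / t_{\mathrm{s}}\), while the centre-of-mass velocity stays fixed. The user-supplied reciprocal stopping time is re-evaluated at the latest iterate until \(\Delta v\) stops changing. Frictional heating (\(\omega\)) is not modelled.

```cpp
#include <cmath>
#include <functional>

struct DragResult {
  double vGas;    // gas velocity after the drag step
  double vDust;   // dust velocity after the drag step
  int iterations; // Picard iterations used
};

// Backward-Euler drag step for one dust species and one velocity component.
// invStoppingTime(dv) returns 1/t_s, possibly depending on dv = v_d - v_g.
DragResult implicitDragStep(double rhoGas, double rhoDust, double vGas, double vDust,
                            double dt,
                            const std::function<double(double)> &invStoppingTime,
                            int maxIter = 50, double relTol = 1e-12) {
  const double eps = rhoDust / rhoGas; // dust-to-gas ratio
  const double vCom = (rhoGas * vGas + rhoDust * vDust) / (rhoGas + rhoDust); // unchanged by drag
  const double dv0 = vDust - vGas;
  double dv = dv0; // Picard iterate for the end-of-step relative velocity
  int iterations = 0;
  while (iterations < maxIter) {
    // Backward Euler: dv_new = dv0 / (1 + (1 + eps) dt / t_s(dv))
    const double dvNew = dv0 / (1.0 + (1.0 + eps) * dt * invStoppingTime(dv));
    ++iterations;
    const double change = std::abs(dvNew - dv);
    dv = dvNew;
    if (change <= relTol * std::abs(dv0)) break; // converged
  }
  // Split the relative velocity back into gas and dust velocities about v_COM.
  const double fGas = rhoGas / (rhoGas + rhoDust);
  const double fDust = rhoDust / (rhoGas + rhoDust);
  return {vCom - fDust * dv, vCom + fGas * dv, iterations};
}
```

With a constant stopping time, the first pass already yields the backward-Euler solution and the second pass only confirms it. Iteration matters once \(1/t_{\mathrm{s}}\) depends on \(\Delta v\).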

User-defined dust stopping time

For a given problem, users must define a problem-specific dust stopping time by implementing the DustDrag::ComputeReciprocalStoppingTime function (note that this function should return the reciprocal of the stopping time). An example can be found in the src/problems/DustDamping test.

Also, users can directly use the dust stopping time calculation helper DustDrag::ComputeReciprocalStoppingTimeKwok to compute the physical dust stopping time, following Kwok (1975) with an optional supersonic correction. The stopping time of dust \(t_{\mathrm{s}}\) is given by:

When \(\gamma=1\), this expression reduces exactly to the isothermal \(t_s\). An example of its usage can be found in the src/problems/DustDampingCorrection test.

CFL Condition for Dust

For the dust-gas coupled system with N dust species, we use the following CFL condition:

Runtime Controls

The following input parameters tune the dust module and are documented in more detail in Runtime parameters:

- enable_iter_stoptime: switch for iterative dust stopping time calculation.

- omega: controls the level of frictional heating.

- print_iteration_counts: switch to turn on/off printing of dust drag iteration counts for debugging.

- dust.density_floor: the minimum dust density value allowed in the simulation.

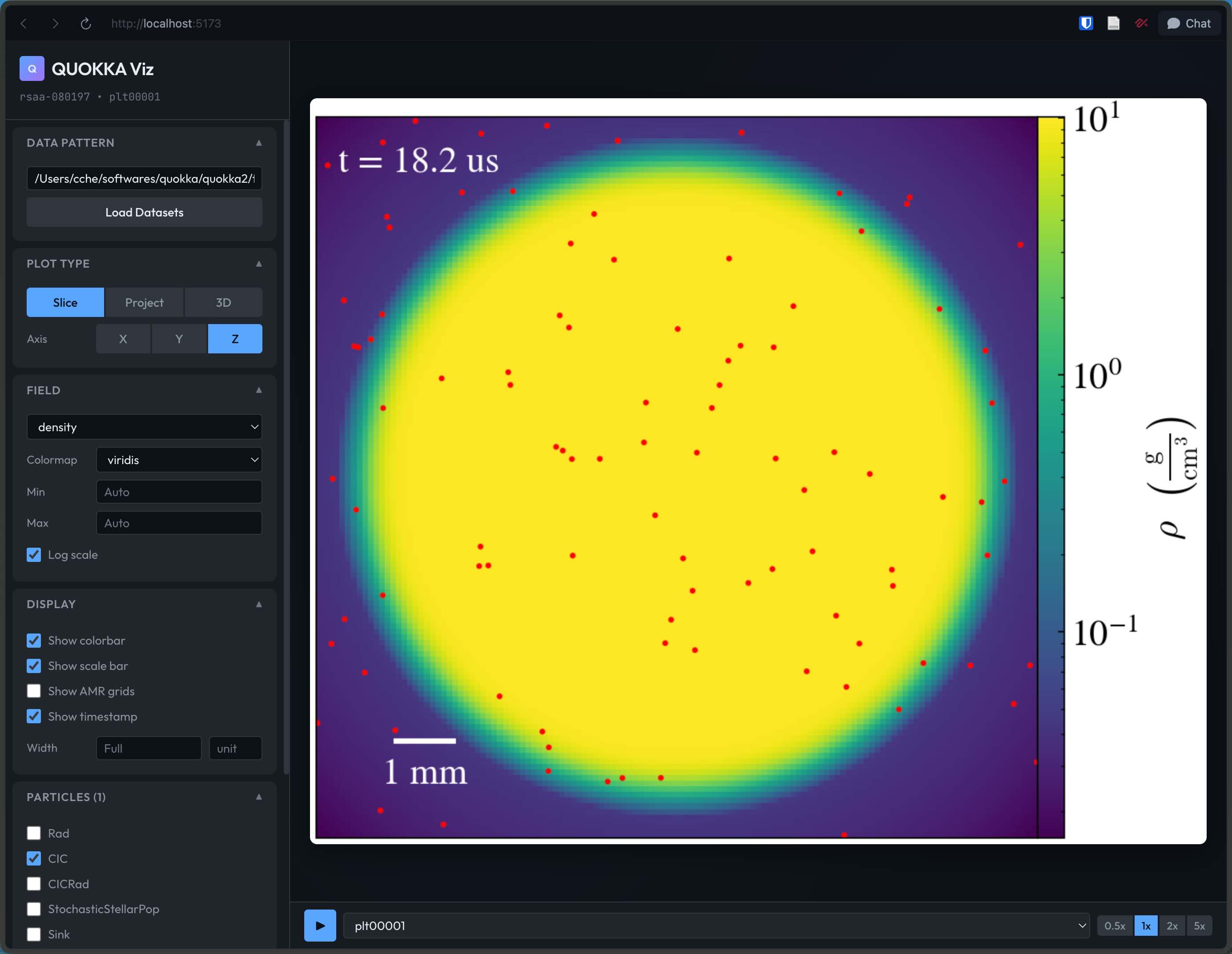

Gallery

AGORA disk galaxy

We simulated a Milky Way-mass disk galaxy with the AGORA initial conditions using stellar feedback from individual massive stars:

Credit: Ben Wibking / Quokka Development Team

QED III

QED is a suite of 3D hydrodynamic simulations that follow supernova-driven outflows in varying galactic environments. The QED simulations follow outflows from a \(1\) kpc\(^2\) patch of a galaxy up to a vertical height of \(\sim\) a few kpc. In the third of a series of QED papers, found here, we explore how the properties of the outflows depend on three galactic environments: the Solar neighbourhood, the inner galaxy, and the outer galaxy.

Solar Neighbourhood

The initial gas density and temperature profiles are derived using the Solar neighbourhood gas surface density, \(13 M_{\odot}\) pc\(^{-2}\). The SN rate is \(6\times 10^{-5}\) yr\(^{-1}\), and the gas cools with Solar (top) and sub-Solar (bottom) metallicity. Although the box height (\(8\) kpc) and the resolution (\(2\) pc, uniform) are the same for both metallicities, the nature of the outflows in these two cases is quite different.

The Solar metallicity case:

The sub-Solar metallicity case:

Inner Galaxy

The initial gas surface density is \(50 M_{\odot}\) pc\(^{-2}\), and the SN rate is commensurately higher, \(3\times 10^{-4}\) yr\(^{-1}\). The domain size and resolution are identical to the Solar neighbourhood case, but note that the evolution with Solar (top) and sub-Solar (bottom) metallicity cooling is closer in nature than in the Solar neighbourhood case.

The Solar metallicity case:

The sub-Solar metallicity case:

Outer Galaxy

The initial gas surface density for the Outer galaxy runs is \(2.5 M_{\odot}\) pc\(^{-2}\). Because the initial gas surface density is lower, the gas scale height of the warm phase is much larger. To accommodate the larger gas scale height, the box size is increased to \(16\) kpc in the vertical direction. The SNe go off in a relatively high-density surrounding medium, which arrests the development of large-scale outflows. The mass loading factor is small.

The Solar-metallicity case looks like this:

The sub-Solar metallicity outflows look like this:

Zoom-in

A \(1\) kpc zoom-in of the Solar neighbourhood case shows the spectacular rise and fall of clouds!

In the Inner galaxy, the zoom-in shows how the disc seems to be breathing in and out!

QED I

The first QED paper, link here, focuses on the metal loading properties of galactic winds launched from the Solar Neighbourhood. Initially, the galaxy is metal-free. As supernova feedback launches outflows from the galaxy, the multiphase outflows are loaded differentially with metals. The hot phase carries most of the metals, while the cooler gas, which is entrained from the disc, is metal-poor. The following movie shows midplane slices of density (top), oxygen mass density (middle), and metallicity (bottom).

Installation

Building on Linux and macOS

These instructions work on both Linux and macOS. If you run into any issues on macOS, see Installation on macOS for detailed instructions.

To run Quokka, download this repository and its submodules to your local machine:

git clone --recursive https://github.com/quokka-astro/quokka.git

Quokka uses CMake (and optionally, Ninja) as its build system. If you don’t have CMake and Ninja installed, the easiest way to install them is to run:

python3 -m pip install cmake ninja --user

Alternatively, if you have uv installed, you can use:

uv pip install cmake ninja

Now that CMake is installed, create a build/ subdirectory and compile Quokka, as shown below.

cd quokka

mkdir build; cd build

cmake .. -DCMAKE_BUILD_TYPE=Release -G Ninja

ninja -j6

Congratulations! You have now built all of the 1D test problems on CPU. You can run the automated test suite:

ninja test

You should see output that indicates all tests have passed, like this:

100% tests passed, 0 tests failed out of 20

Total Test time (real) = 111.74 sec

To run in 2D or 3D, build with the -DAMReX_SPACEDIM CMake option, for example:

cmake .. -DCMAKE_BUILD_TYPE=Release -DAMReX_SPACEDIM=3 -G Ninja

ninja -j6

to compile Quokka for 3D problems.

By default, Quokka compiles itself only for CPUs. If you want to run Quokka on GPUs, see the section “Running on GPUs” below.

Have fun!

Building with CMake + make

If you are unable to install Ninja, you can instead use CMake with the Makefile generator, which should produce identical results but is slower:

cmake .. -DCMAKE_BUILD_TYPE=Release -G "Unix Makefiles"

make -j6

make test

Could NOT find Python error

If CMake prints an error saying that Python could not be found, e.g.:

-- Could NOT find Python (missing: Python_EXECUTABLE Python_INCLUDE_DIRS Python_LIBRARIES Python_NumPy_INCLUDE_DIRS Interpreter Development NumPy Development.Module Development.Embed)

you should be able to fix this by installing NumPy (and matplotlib) by running

python3 -m pip install numpy matplotlib --user

or with uv:

uv pip install numpy matplotlib

This should enable CMake to find the NumPy header files that are needed to successfully compile.

Alternatively, you can work around this problem by disabling Python support. Python and NumPy are only used to plot the results of some test problems, so this does not otherwise affect Quokka’s functionality. Add the option

-DQUOKKA_PYTHON=OFF

to the CMake command-line options (or change the QUOKKA_PYTHON option to OFF in CMakeLists.txt).

Running on GPUs

By default, Quokka compiles itself to run only on CPUs. Quokka can run on either NVIDIA or AMD GPUs. Consult the sub-sections below for the build instructions for a given GPU vendor.

NVIDIA GPUs

If you want to run on NVIDIA GPUs, re-build Quokka as shown below. (CUDA >= 11.7 is required. Quokka is only supported on Volta V100 GPUs or newer models. Your MPI library must support CUDA-aware MPI.)

cmake .. -DCMAKE_BUILD_TYPE=Release -DAMReX_GPU_BACKEND=CUDA -DAMReX_SPACEDIM=3 -G Ninja

ninja -j6

All GPUs on a node must be visible from each MPI rank on the node for efficient GPU-aware MPI communication to take place via CUDA IPC. When using the SLURM job scheduler, this means that --gpu-bind should be set to none.

The compiled test problems are in the test problem subdirectories in build/src/. Example scripts for running Quokka on compute clusters are in the scripts/ subdirectory.

Note that 1D problems can run very slowly on GPUs due to a lack of sufficient parallelism. To run the test suite in a reasonable amount of time, you may wish to exclude the matter-energy exchange tests, e.g.:

ctest -E "MatterEnergyExchange*"

which should end with output similar to the following:

100% tests passed, 0 tests failed out of 18

Total Test time (real) = 353.77 sec

AMD GPUs

Requires ROCm 6.3.0 or newer. After installing ROCm, the directory containing the HIP and other related binaries must be added to the PATH environment variable.

Build with -DAMReX_GPU_BACKEND=HIP. Your MPI library must support GPU-aware MPI for AMD GPUs. The typical AMD GPU compilers are amdclang++ or hipcc. If your GPU-aware compiler is not used by default during the build, use the -DCMAKE_CXX_COMPILER and -DCMAKE_C_COMPILER options to specify the C++ and C compilers, respectively. The AMD GPU architecture may also have to be specified, which can be done with the -DAMReX_GPU_ARCH option. The GPU architecture can be found using

rocminfo | grep gfx

A typical build command using the amdclang++ compiler and an AMD GPU with the RDNA 2 (gfx1031) architecture looks like this:

cmake .. -DCMAKE_BUILD_TYPE=Release \
  -DCMAKE_CXX_COMPILER=amdclang++ \
  -DCMAKE_C_COMPILER=amdclang \
  -DAMReX_GPU_BACKEND=HIP \
  -DAMReX_GPU_ARCH=gfx1031 \
  -G Ninja

Quokka has been tested on MI100, MI250X and 6700XT GPUs.

Intel GPUs (does not compile)

Due to limitations in the Intel GPU programming model, Quokka currently cannot be compiled for Intel GPUs. (See https://github.com/quokka-astro/quokka/issues/619 for the technical details.)

Building a specific test problem

By default, all available test problems will be compiled. If you only want to build a specific problem, you can list all of the available CMake targets:

cmake --build . --target help

and then build the problem of interest:

ninja -j6 test_hydro3d_blast

Building on macOS

This guide provides detailed instructions for building Quokka on macOS systems.

Prerequisites

Before installing Quokka, you need to ensure that you have a working C++ compiler, MPI library, CMake, Ninja, and HDF5 installed on your system.

Step 1: Verify C++ Compiler

First, check if you have a working C++ compiler installed by compiling a simple program:

cat > /tmp/cpp.cpp <<'EOF'
#include <iostream>
int main(){ std::cout << "C++ works\n"; }
EOF

clang++ /tmp/cpp.cpp -o /tmp/cpp && /tmp/cpp

If this command succeeds and prints “C++ works”, you’re good to go. If not, you’ll need to install Xcode Command Line Tools:

xcode-select --install

Follow the prompts to complete the installation, then verify C++ works again using the test above.

Step 2: Verify and Install MPI

Check if MPI is already installed:

mpicxx --version

If MPI is not installed, install it using Homebrew:

# Install Homebrew if you don't have it
/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

# Install Open MPI
brew install open-mpi

After installation, verify that MPI works correctly:

mpicxx --version

mpicxx --show

cat > /tmp/mpi_cpp.cpp <<'EOF'
#include <mpi.h>
#include <iostream>
int main(int argc, char **argv) {
  MPI_Init(&argc, &argv);
  int r;
  MPI_Comm_rank(MPI_COMM_WORLD, &r);
  std::cout << "Hello from C++ rank " << r << "\n";
  MPI_Finalize();
  return 0;
}
EOF

mpicxx /tmp/mpi_cpp.cpp -o /tmp/mpi_cpp && /tmp/mpi_cpp

This should compile and run successfully, printing “Hello from C++ rank 0”.

Step 3: Install CMake and Ninja

You can install CMake and Ninja using either pip or Homebrew.

Option 1: Install via pip or uv

Using pip:

python3 -m pip install cmake ninja --user

Using uv (if you have uv installed):

uv tool install cmake

uv tool install ninja

Note: For uv, you may also use uv pip install cmake ninja if you’re working within a virtual environment.

Option 2: Install via Homebrew

brew install cmake ninja

Verify the installation:

cmake --version

ninja --version

Step 4: Install HDF5 (Required)

HDF5 is required to build Quokka. On macOS, we recommend installing it with Homebrew:

brew install hdf5

Step 5: Install Python Dependencies (Optional but Recommended)

Some test problems use Python for plotting results. Install NumPy and matplotlib:

Using pip:

python3 -m pip install numpy matplotlib --user

Using uv:

uv pip install numpy matplotlib

Set PYTHONPATH to the site-packages directory of your Python environment. For example:

export PYTHONPATH=~/softwares/quokka/.venv/lib/python3.14/site-packages

If PYTHONPATH is unset or points to the wrong environment, post-processing imports may fail at runtime (for example ModuleNotFoundError: No module named 'numpy' or ImportError: numpy._core.multiarray failed to import), and tests such as RadDust can abort when loading matplotlib.

If you skip this step, you can disable Python support later by adding -DQUOKKA_PYTHON=OFF to the CMake configuration.

Building Quokka

Continue with the instructions in Building on Linux and macOS.

Troubleshooting

MPI Compiler Issues

If you encounter issues with the MPI compiler, you can explicitly specify it:

cmake .. -DCMAKE_CXX_COMPILER=mpicxx -DCMAKE_C_COMPILER=mpicc -DCMAKE_BUILD_TYPE=Release -G Ninja

Running on HPC clusters

Instructions for running on various HPC clusters are given below.

Gadi (NCI Australia)

The recommended build procedure on Gadi is:

source scripts/hpc_profiles/gadi_hopper.profile

cmake -B build -S . -DCMAKE_BUILD_TYPE=Release -DAMReX_GPU_BACKEND=CUDA -DAMReX_SPACEDIM=3

cmake --build build -j 8 --target HydroBlast3D

Then a single-node test job can be run with:

qsub scripts/pbs/gpuhopper-1node.pbs

You can replace HydroBlast3D with the name of the problem you want to compile.

Using VisIt

You can use VisIt in client/server mode with the following server-side patch for the launcher script.

A host file is provided here. You must change the username, project code, and server-side VisIt path.

Setonix (Pawsey)

The recommended build procedure on Setonix is:

source scripts/hpc_profiles/setonix-gpu.profile

cmake -B build -S . -DCMAKE_BUILD_TYPE=Release -DAMReX_GPU_BACKEND=HIP -DAMReX_SPACEDIM=3 -DHDF5_ROOT="$PAWSEY_HDF5_HOME"

cmake --build build -j 32 --target HydroBlast3D

Then a single-node test job can be run with:

sbatch scripts/slurm/setonix-1node.submit

You can replace HydroBlast3D with the name of the problem you want to compile.

Workaround for interconnect issues

If interconnect issues are observed, we recommend adding the following line:

export FI_CXI_RX_MATCH_MODE=software

to your job scripts.

Adaptive Mesh Refinement Guidelines

Quokka relies on the Berger-Colella AMR algorithm (Berger & Colella, 1989) provided by AMReX to create a hierarchy of nested grids. This page distills practical advice for planning and tuning AMR runs so the mesh tracks the physics you care about without wasting resolution elsewhere.

Understanding the Refinement Workflow

AMReX walks through a predictable sequence each time it builds or adjusts the mesh hierarchy. Cells are tagged inside ErrorEst, those tags are coarsened according to blocking_factor/ref_ratio, clustered by the Berger-Rigoutsos algorithm (Berger & Rigoutsos, 1991), and then promoted to new grids that honor blocking_factor and max_grid_size. The resulting hierarchy is averaged down so coarse levels remain consistent with fine ones. Keeping this loop in mind helps interpret why the mesh responds the way it does when the problem evolves or when you revisit your refinement logic.
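The coarsening step can be pictured with the small sketch below. It is illustrative only, since AMReX performs this step internally. A 2D tag mask is coarsened by an integer factor \(c\), here blocking_factor / ref_ratio, so a coarse cell is tagged if any fine cell inside it is. boxEfficiency then reports the tagged fraction of a candidate box, which is the quantity that clustering compares against the grid-efficiency threshold. Both function names are hypothetical.

```cpp
#include <cstddef>
#include <vector>

using TagMask = std::vector<char>; // row-major 2D mask: tags[j * nx + i] != 0 means tagged

// Coarsen an nx x ny mask by factor c: a coarse cell is tagged if any fine cell in it is.
TagMask coarsenTags(const TagMask &fine, int nx, int ny, int c, int &cnx, int &cny) {
  cnx = (nx + c - 1) / c;
  cny = (ny + c - 1) / c;
  TagMask coarse(static_cast<std::size_t>(cnx) * cny, 0);
  for (int j = 0; j < ny; ++j)
    for (int i = 0; i < nx; ++i)
      if (fine[static_cast<std::size_t>(j) * nx + i])
        coarse[static_cast<std::size_t>(j / c) * cnx + i / c] = 1;
  return coarse;
}

// Tagged fraction of the inclusive box [ilo, ihi] x [jlo, jhi] in a mask of width nx.
double boxEfficiency(const TagMask &tags, int nx, int ilo, int jlo, int ihi, int jhi) {
  int tagged = 0, total = 0;
  for (int j = jlo; j <= jhi; ++j)
    for (int i = ilo; i <= ihi; ++i) {
      ++total;
      if (tags[static_cast<std::size_t>(j) * nx + i]) ++tagged;
    }
  return total > 0 ? static_cast<double>(tagged) / total : 0.0;
}
```

With blocking_factor = 32 and ref_ratio = 2, a single tagged cell marks a whole \(16 \times 16\) block. This is why large blocking factors can refine far more volume than was actually tagged.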

In Quokka, AMReX still calls an ErrorEst function, but this is now a thin wrapper around refineGrid that also invokes refineGridsAroundParticles to tag cells surrounding particles. The user should therefore define refineGrid instead of ErrorEst; the wrapper will automatically call refineGrid and then add particle-based tags on top.

Setting AMR Parameters

Several inputs shape how aggressively the hierarchy responds to refinement tags:

| Parameter | Description | Typical Values | Performance Impact |
|---|---|---|---|
| blocking_factor | Minimum grid dimension; grids must be integer multiples of this value | 8-32 | Large values improve GPU throughput but can force broad refinement |
| grid_efficiency | Minimum fraction of tagged cells in each grid | 0.7-1.0 | Higher values reduce wasted work but may spawn extra boxes |
| max_grid_size | Maximum dimension of a grid patch | 64-128 | Smaller sizes improve load balance; larger sizes reduce communication |
| ref_ratio | Refinement ratio between adjacent levels | 2 or 4 | Larger ratios reach a given resolution with fewer levels but produce sharper coarse-fine jumps |

Choose these controls as a set: a generous blocking_factor demands tighter tagging or higher grid_efficiency to avoid carpet refinement, while a smaller blocking factor allows surgical meshes but can stress GPUs. When in doubt, run short experiments that sweep one parameter at a time and compare the resulting plotfile headers to see how many grids are created per level.
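When comparing ref_ratio choices, it can help to count the levels needed to reach a target effective resolution. The hypothetical helper below is a back-of-the-envelope tool. Starting from \(n_0\) cells per dimension on level 0, it returns the smallest number of refined levels \(L\) with \(n_0 r^L \geq\) target.

```cpp
// Smallest number of refined levels L with n0 * r^L >= target (cells per
// dimension); returns -1 for invalid input.
int levelsNeeded(long long n0, long long target, int r) {
  if (n0 < 1 || r < 2) return -1;
  int levels = 0;
  for (long long n = n0; n < target; n *= r) ++levels;
  return levels;
}
```

With amr.n_cell = 128, reaching 1024 cells per dimension takes three levels at ref_ratio 2. At ref_ratio 4 it takes only two, which overshoots to 2048.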

Performance and Scaling Considerations

GPU runs typically favor blocking_factor ≥ 32 so that each patch contains enough cells to keep the GPU's streaming multiprocessors (SMs) busy. With smaller patches, kernel-launch overhead and ghost-cell exchange take up a larger share of the runtime. CPU multigrid solves prefer at least 8. If you push those limits, guard your memory footprint by trimming the number of refinement levels or enlarging max_grid_size so patches do not proliferate. Also watch how frequently you trigger regrid calls. Rapid regridding magnifies the cost of tag evaluation and repartitioning, so coarse control logic or hysteresis inside refineGrid can pay dividends on very dynamic problems.

Observing and Adjusting the Hierarchy

Inspect the grid structure early in a run by opening the plotfile header or by enabling verbose regridding logs in AMReX. When the layout diverges from expectations, compare the tagged volume to the resulting grid inventory—discrepancies usually trace back to either tag dilution during coarsening or to efficiency limits that extend each patch. The spherical collapse study documented in GitHub issue #978 is a useful reminder that large blocking factors can still trigger whole-domain refinement if tags are sparse, so monitor that scenario whenever you upscale a production run.

Inspecting grids with amrex_fboxinfo

AMReX ships a lightweight reporter named amrex_fboxinfo that prints the box layout stored in a plotfile. Make sure your CMake cache was configured with -DAMReX_PLOTFILE_TOOLS=ON, then build the utility once per build tree with cmake --build build --target fboxinfo. The resulting executable lives at build/extern/amrex/Tools/Plotfile/amrex_fboxinfo. Point it at any plotfile to see how much of the domain each AMR level occupies and how many boxes AMReX created:

$ build/extern/amrex/Tools/Plotfile/amrex_fboxinfo plt00040

plotfile: plt00040

level 0: number of boxes = 1, volume = 100.00%, number of cells = 16384

maximum zones = 128 x 128

level 1: number of boxes = 4, volume = 12.50%, number of cells = 8192

maximum zones = 256 x 256

The summary lines are the quickest health check. The volume value reports the percentage of the level-ℓ domain covered by refined grids, so values creeping toward 100% warn that your refinement criteria are effectively forcing a uniform mesh. The maximum zones line reports the size of each level's domain in cells, so the ratio between successive levels (128 to 256 in the output above) should match ref_ratio. Add --full to list every box and verify the coordinates land on multiples of blocking_factor, or --gridfile to emit a grid-description file for AMReX regression tools. When you only need the number of active refinement levels (for log parsing, for example), pass --levels to suppress the rest of the report.

Example: Berger-Rigoutsos in Practice

This figure shows an illustrative 2D blast-wave tagging pattern. The setup uses amr.n_cell = 128 128 4, amr.ref_ratio = 2 2 1, amr.blocking_factor = 32 32 4, amr.grid_eff = 0.8, and amr.n_error_buf = 1; in a 2D build the third entry in each list is ignored, so the effective blocking factor is 32. A pressure-gradient trigger in refineGrid tags a thin ring around the blast front. AMReX coarsens those tags by a factor of 16 (blocking_factor / ref_ratio = 32/2) before clustering, so the Berger-Rigoutsos pass finds four rectangles that tile the annulus: two long bands (lo, hi) = (64,96)→(191,127) and (64,128)→(191,159) plus two shorter bridges (96,64)→(159,95) and (96,160)→(159,191). Running amrex_fboxinfo --full plt00040 on the resulting plotfile shows the same coordinates, confirming that the level-one patches are multiples of 32 cells in each direction and fully envelop the tagged region after refinement. Comparing those boxes with the tags in the plotfile, as sketched above, is a quick way to verify that the clustering step is behaving the way you expect.
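The alignment claim in this example can be checked mechanically. The hypothetical helpers below use inclusive cell indices, as printed by amrex_fboxinfo --full. They test whether each box starts on a multiple of blocking_factor and spans a whole number of blocks, and whether any two boxes overlap. The check is applied to the four level-1 boxes listed above with a blocking factor of 32.

```cpp
#include <cstddef>
#include <vector>

struct Box2D {
  int ilo, jlo, ihi, jhi; // inclusive cell indices
};

// A box is aligned if its lower corner and its extent are multiples of bf.
bool isAligned(const Box2D &b, int bf) {
  return b.ilo % bf == 0 && b.jlo % bf == 0 && (b.ihi + 1) % bf == 0 &&
         (b.jhi + 1) % bf == 0;
}

bool overlaps(const Box2D &a, const Box2D &b) {
  return a.ilo <= b.ihi && b.ilo <= a.ihi && a.jlo <= b.jhi && b.jlo <= a.jhi;
}

// True if every box is aligned and no two boxes overlap.
bool validLayout(const std::vector<Box2D> &boxes, int bf) {
  for (std::size_t m = 0; m < boxes.size(); ++m) {
    if (!isAligned(boxes[m], bf)) return false;
    for (std::size_t n = m + 1; n < boxes.size(); ++n)
      if (overlaps(boxes[m], boxes[n])) return false;
  }
  return true;
}
```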

Troubleshooting Patterns

Start with the simplest levers. Temporarily reduce amr.blocking_factor to check the tagging logic, then restore the larger production value once the tags look reasonable. Next, raise amr.grid_eff gradually. Higher values fit the boxes more tightly around the tagged cells at the cost of more, smaller boxes, so stop once the refined region stabilizes without a large jump in box count. If the hierarchy thrashes between regrid steps, consider saving diagnostic arrays that record why each cell was tagged. A quick visualization of those masks often pinpoints whether gradients, thresholds, or boundary effects are misbehaving.

Further Reading

For deeper dives into refinement theory and AMReX implementation details, consult the original Berger & Colella paper (Berger & Colella, 1989), the Berger-Rigoutsos clustering method (Berger & Rigoutsos, 1991), and the AMReX-Astro Castro regridding notes. The AMReX source documentation also clarifies lower-level options that Quokka exposes through its input files.

Test problems

See the table of all test problems in the Quokka codebase below.

Listed here are detailed descriptions of some of the test problems. This list is still under construction.

- A table of all test problems

- Radiative shock test

- Shu-Osher shock test

- Slow-moving shock test

- Matter-radiation temperature equilibrium test

- Uniform advecting radiation in diffusive limit

- Advecting radiation pulse test

Table of all test problems

This table lists all test problems in the Quokka codebase. The acronyms used are as follows:

- SG: Single-group radiation

- MG: Multi-group radiation

- ThermalDust: Dust thermally coupled to the gas

- PE: Photoelectric heating

- CIC: Cloud-in-cell particles

| Problem | DIM | Hydro | MHD | Rad | Gravity | Particles | PassiveScalars |
|---|---|---|---|---|---|---|---|
| Advection | 1 | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |
| AdvectionSemiellipse | 1 | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |
| AlfvenWaveCircular | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| AlfvenWaveLinear | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| BinaryOrbitCIC | 3 | ✅ | ❌ | ❌ | ✅ | CIC | ❌ |
| BrioWuShockTube | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| CurrentSheet | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| DiskGalaxy | 3 | ✅ | ❌ | ❌ | ✅ | CIC, StochasticStellarPop | ❌ |
| FCQuantities | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| FastWave | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| FieldLoop | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| GravRadParticle3D | 3 | ❌ | ❌ | SG | ✅ | CIC, Rad, CICRad | ❌ |
| HydroBlast3D | 3 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroContact | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | 2 |
| HydroHighMach | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroLeblanc | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroQuirk | 2 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroSMS | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroShocktube | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroShocktubeCMA | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | 3 |
| HydroShuOsher | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroVacuum | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroWave | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| HydroWaveConvergence | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| MHDBlast | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| MHDQuirk | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| NscbcChannel | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | 1 |
| NscbcVortex | 2 | ✅ | ❌ | ❌ | ❌ | ❌ | 1 |
| ODEIntegration | 1 | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |
| OrszagTang | 3 | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |
| ParticleAccretion | 3 | ✅ | ❌ | ❌ | ✅ | Sink | ❌ |
| ParticleCreation | 3 | ✅ | ❌ | ❌ | ✅ | Test | ❌ |
| ParticleRadiation | 3 | ✅ | ❌ | MG | ❌ | StochasticStellarPop | ❌ |
| ParticleSF | 3 | ✅ | ❌ | ❌ | ❌ | StochasticStellarPop | ❌ |
| ParticleSink | 3 | ✅ | ❌ | ❌ | ✅ | Sink | ❌ |
| ParticleSinkFormation | 3 | ✅ | ❌ | ❌ | ✅ | Sink | ❌ |
| PassiveScalar | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | 1 |
| PopIII | 3 | ✅ | ❌ | ❌ | ✅ | ❌ | ❌ |
| PrimordialChem | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| RadDust | 1 | ✅ | ❌ | SG+ThermalDust | ❌ | ❌ | ❌ |
| RadDustMG | 1 | ✅ | ❌ | MG+ThermalDust | ❌ | ❌ | ❌ |
| RadForce | 1 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RadLineCooling | 1 | ✅ | ❌ | SG+ThermalDust | ❌ | ❌ | ❌ |
| RadLineCoolingMG | 1 | ✅ | ❌ | MG+ThermalDust+PE | ❌ | ❌ | ❌ |
| RadMarshak | 1 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadMarshakAsymptotic | 1 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadMarshakCGS | 1 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadMarshakDust | 1 | ❌ | ❌ | MG+ThermalDust | ❌ | ❌ | ❌ |
| RadMarshakDustPE | 1 | ❌ | ❌ | MG+ThermalDust+PE | ❌ | ❌ | ❌ |
| RadMarshakVaytet | 1 | ❌ | ❌ | MG | ❌ | ❌ | ❌ |
| RadMatterCoupling | 1 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadMatterCouplingRSLA | 1 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadStreaming | 1 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadStreamingY | 2 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadSuOlson | 1 | ❌ | ❌ | SG | ❌ | ❌ | ❌ |
| RadTube | 1 | ✅ | ❌ | MG | ❌ | ❌ | ❌ |
| RadhydroBB | 1 | ✅ | ❌ | MG | ❌ | ❌ | ❌ |
| RadhydroPulse | 1 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RadhydroPulseDyn | 1 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RadhydroPulseGrey | 1 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RadhydroPulseMGconst | 1 | ✅ | ❌ | MG | ❌ | ❌ | ❌ |
| RadhydroPulseMGint | 1 | ✅ | ❌ | MG | ❌ | ❌ | ❌ |
| RadhydroShell | 3 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RadhydroShock | 1 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RadhydroShockCGS | 1 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RadhydroShockMultigroup | 1 | ✅ | ❌ | MG | ❌ | ❌ | ❌ |
| RadhydroUniformAdvecting | 1 | ✅ | ❌ | SG | ❌ | ❌ | ❌ |
| RandomBlast | 3 | ✅ | ❌ | ❌ | ✅ | ❌ | 1 |
| RayleighTaylor3D | 3 | ✅ | ❌ | ❌ | ❌ | ❌ | 1 |
| ResampledCoolingTest | 1 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| SN | 3 | ✅ | ❌ | ❌ | ❌ | Test | ❌ |
| ShockCloud | 3 | ✅ | ❌ | ❌ | ❌ | ❌ | 3 |
| SphericalCollapse | 3 | ✅ | ❌ | ❌ | ✅ | CIC | ❌ |
| StarCluster | 3 | ✅ | ❌ | ❌ | ✅ | ❌ | ❌ |
| Turbulence | 3 | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |

Radiative shock test

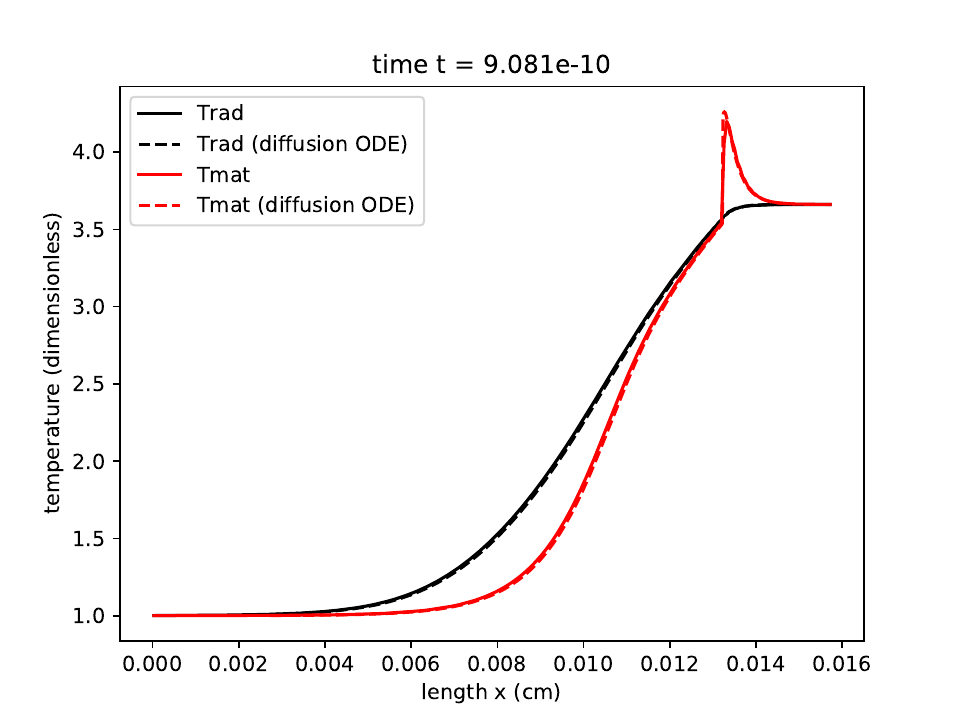

This test problem demonstrates the correct coupled solution of the hydrodynamics and radiation moment equations for a subcritical radiative shock. In the nonequilibrium radiation diffusion approximation, the steady-state solution is given by a set of coupled ODEs that can be solved to arbitrary precision following the method of Lowrie & Edwards (2008).

Parameters

The dimensionless shock parameters (Lowrie & Edwards, 2008) are:

Following Skinner et al. (2019), we scale to dimensional values assuming

and obtain the following pre-shock and post-shock states:

We adopt a reduced speed of light (as used in Skinner et al., 2019)

Solution

Since the reference solution assumes radiation diffusion, we set the Eddington factor (used in the Riemann solver for the radiation moment equations) to a constant value of \(1/3\) everywhere.

We use the RK2 integrator with a CFL number of 0.2 and a mesh of 256 equally-spaced zones. After 3 shock crossing times, we obtain a solution for the radiation temperature and matter temperature that agrees to better than 0.5% (in relative L1 norm) with the steady-state ODE solution to the radiation hydrodynamics equations:

The radiation temperature is shown in black and the material temperature in red. In each case, the solid line is the numerical solution and the dashed line is the semi-analytic solution.

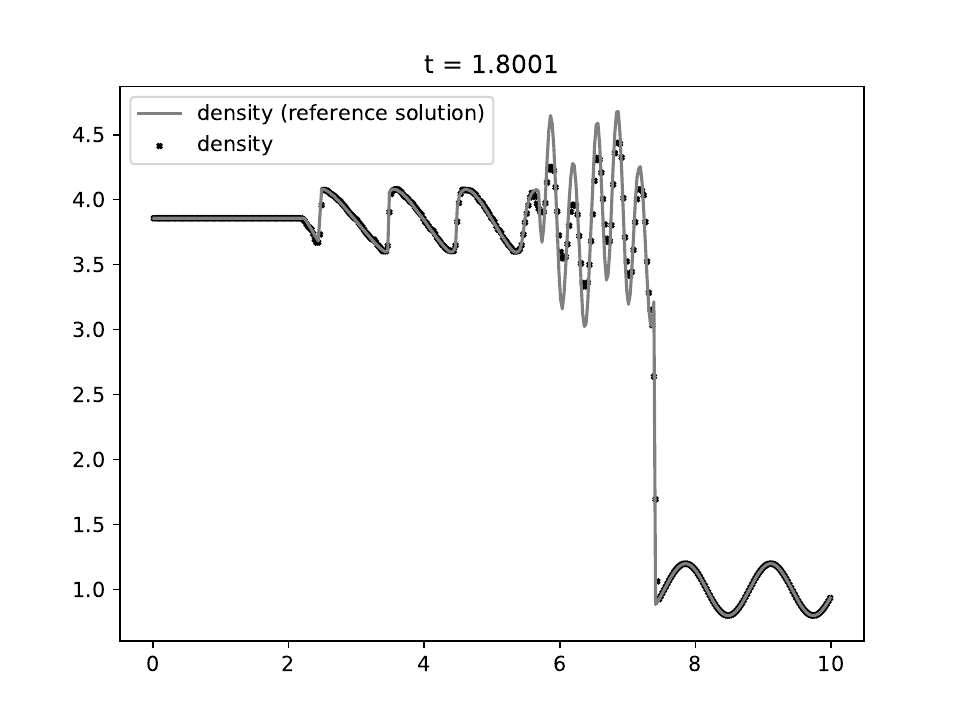

Shu-Osher shock test

This test problem demonstrates the ability of the code to resolve fine features and shocks simultaneously. The resolution of our code is comparable to that of the ENO-RF-3 scheme shown in Figure 14b of Shu & Osher (1989).

Parameters

The left- (\(x < 1.0\)) and right-side (\(x \ge 1.0\)) initial conditions are:

Solution

We use the RK2 integrator with a fixed timestep of \(10^{-4}\) and a mesh of 400 equally-spaced zones, evolving until time \(t=1.8\). There are some subtle stair-step artifacts similar to those seen in the sawtooth linear advection test, but these converge away as the spatial resolution is increased.

These artifacts can be eliminated by projecting the interface states onto the characteristic waves and performing the reconstruction in the characteristic variables, as done in §4 of Shu & Osher (1989). The reference solution is computed using Athena++ with PPM reconstruction in the characteristic variables on a grid of 1600 zones.

The density is shown as the solid blue line. There is no exact solution for this problem.

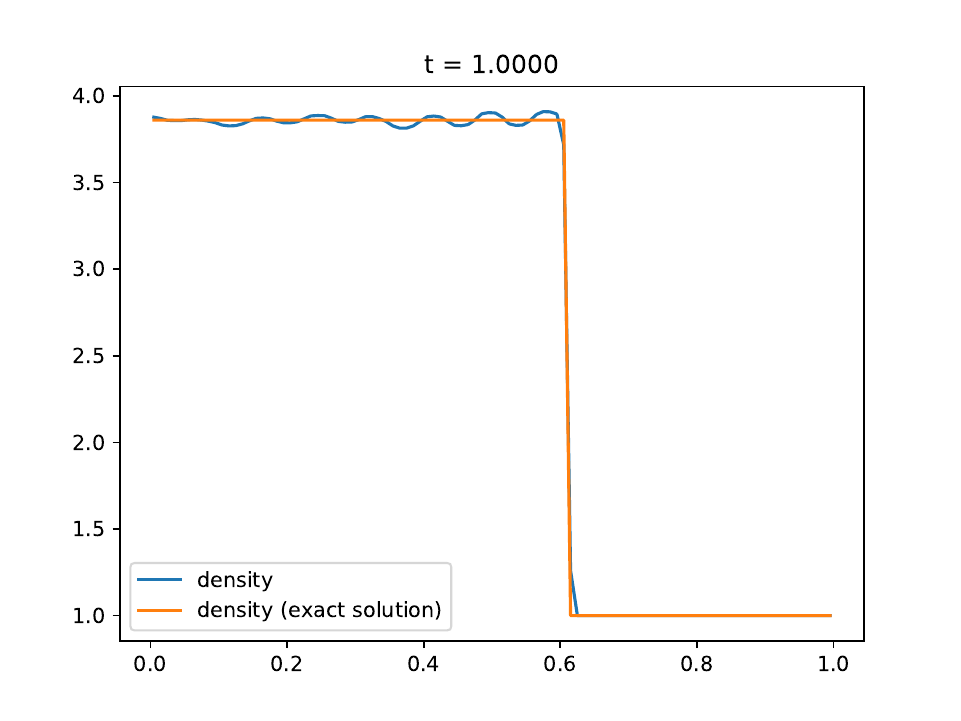

Slow-moving shock test

This test problem demonstrates the extent to which post-shock oscillations are controlled in slowly moving shocks. This effect can appear in any Godunov code, even with first-order methods, when a shock moves sufficiently slowly across the computational grid (Jin & Liu, 1996).

The shock-flattening method of Colella & Woodward (1984), which our code implements in modified form, reduces the oscillations but does not completely suppress them. Adding artificial viscosity following the method of Colella & Woodward (1984) does not cure the problem, even when the viscosity is strong enough to smooth the contact discontinuity over 5-10 cells.

Parameters

The left- and right-side initial conditions are (Quirk, 1994):

The shock moves to the right with speed \(s = 0.1096\).

Solution

We use the RK2 integrator with a fixed timestep of \(10^{-3}\) and a mesh of 100 equally-spaced cells. The contact discontinuity is initially placed at \(x=0.5\).
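A rough estimate shows why this shock counts as slow-moving. Assume a unit domain \(0 \le x \le 1\); the domain length is not stated above, so this is an assumption. With 100 cells, \(\Delta x = 10^{-2}\), and with the fixed timestep the shock advances only a small fraction of a cell per step:

\[
s\,\Delta t = 0.1096 \times 10^{-3} = 1.096 \times 10^{-4}, \qquad
\frac{\Delta x}{s\,\Delta t} = \frac{10^{-2}}{1.096 \times 10^{-4}} \approx 91 .
\]

Under this assumption, the shock takes roughly 91 timesteps to cross a single cell.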

The density is shown as the solid blue line. The exact solution is the solid orange line.

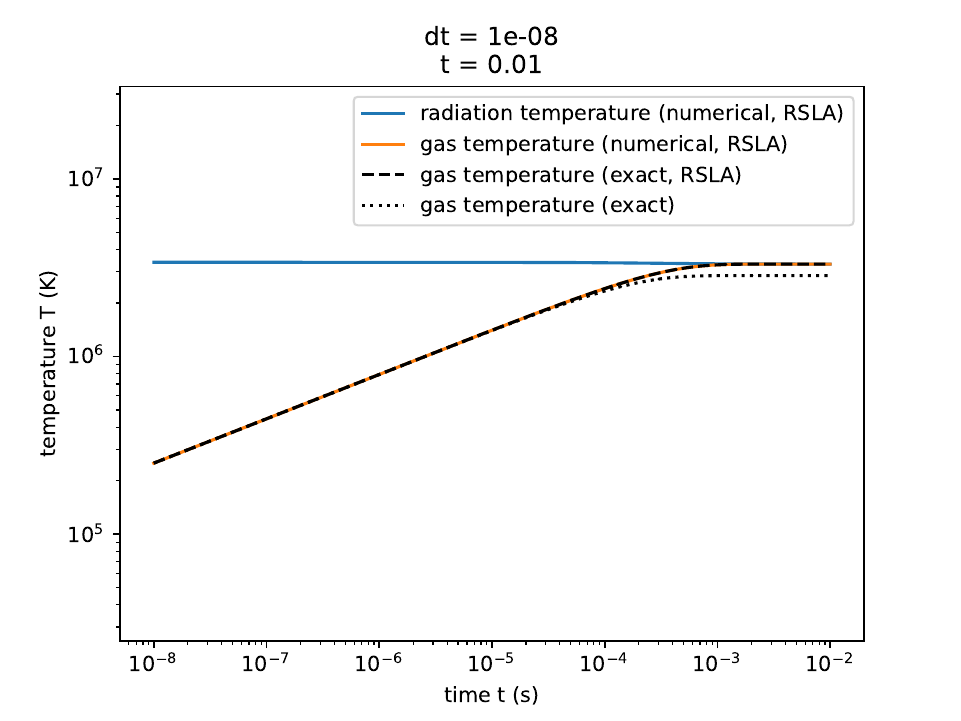

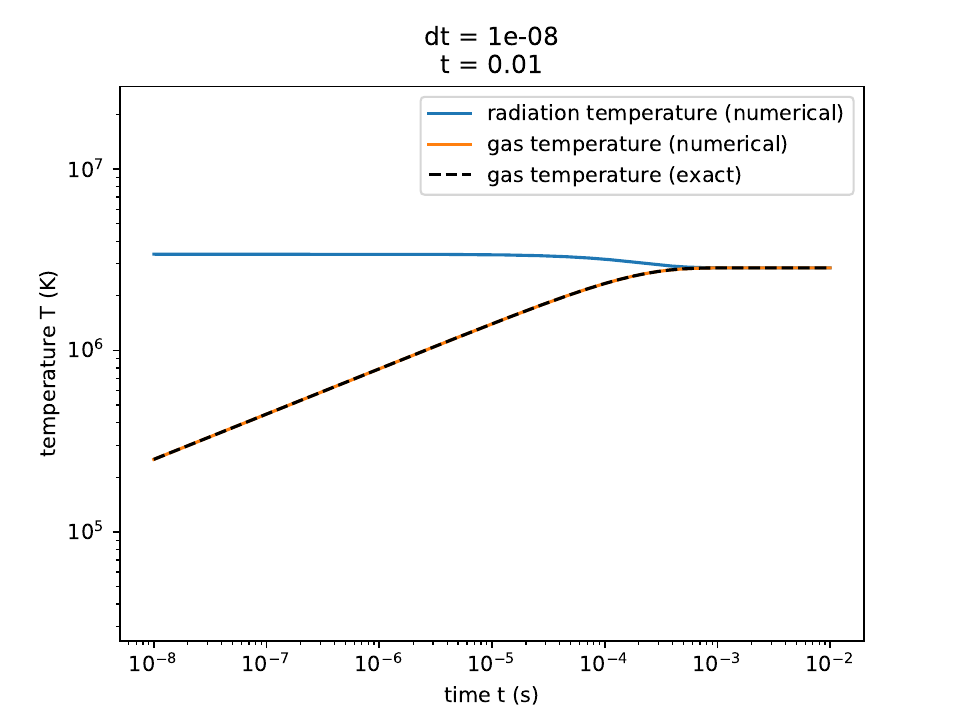

Matter-radiation temperature equilibrium test

This test problem demonstrates the correct coupled solution of the matter-radiation energy balance equations. We also demonstrate that the equilibrium temperature is incorrect in the reduced speed of light approximation.

Parameters

The initial energy densities are:

We assume a specific heat \(c_v = \alpha T^3\), which admits an analytic solution. We adopt a reduced speed of light with \(\hat c = 0.1 c\).

Solution

The exact time-dependent solution for the matter temperature \(T\) is:

We show the numerical results below:

The radiation temperature and matter temperatures in the reduced speed-of-light approximation, along with the exact solution for the matter temperature.

The radiation temperature and matter temperatures, along with the exact solution for the matter temperature.

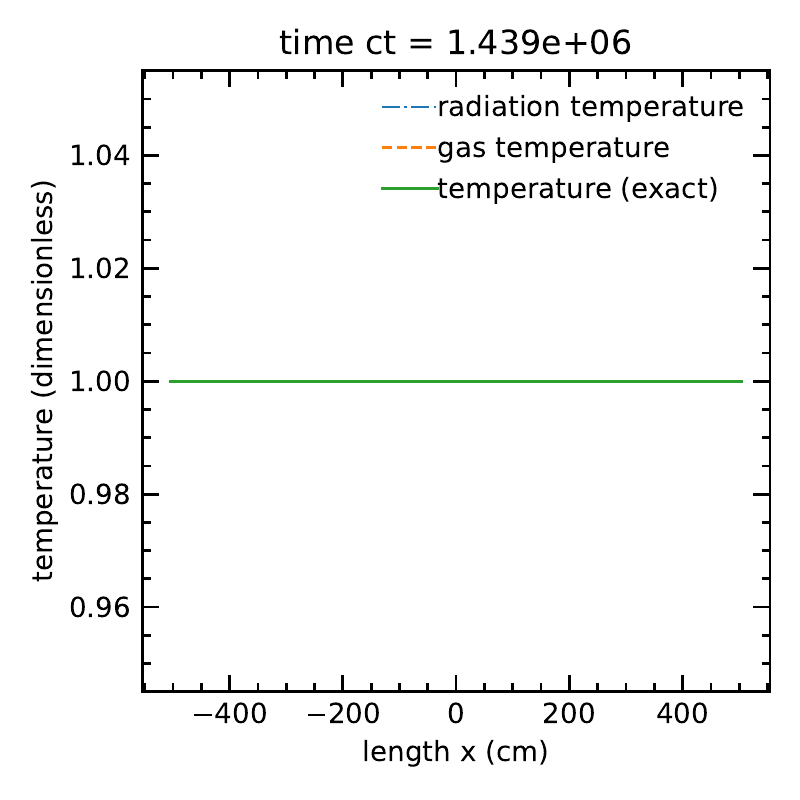

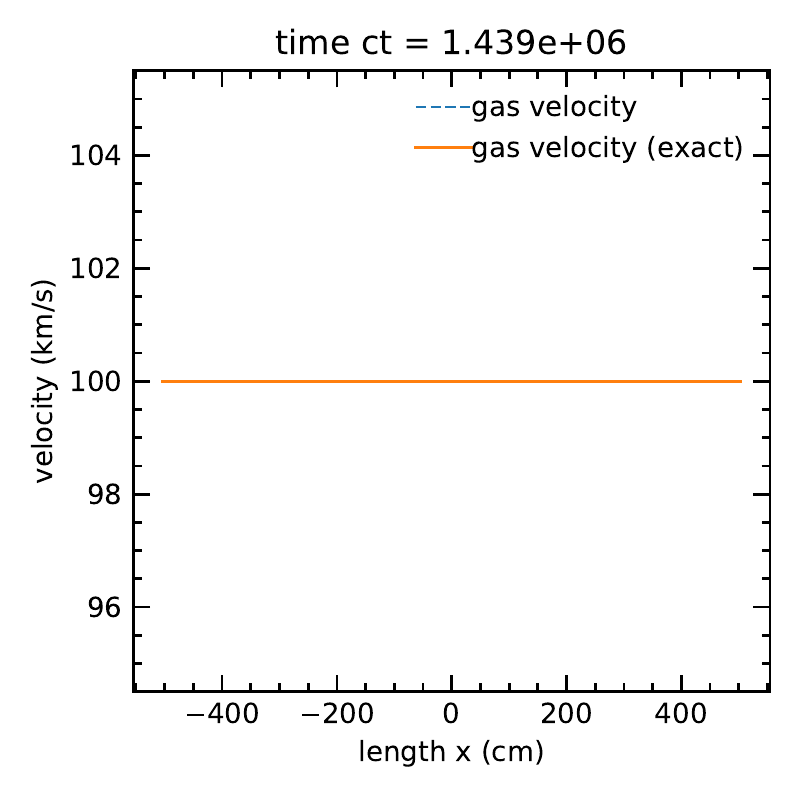

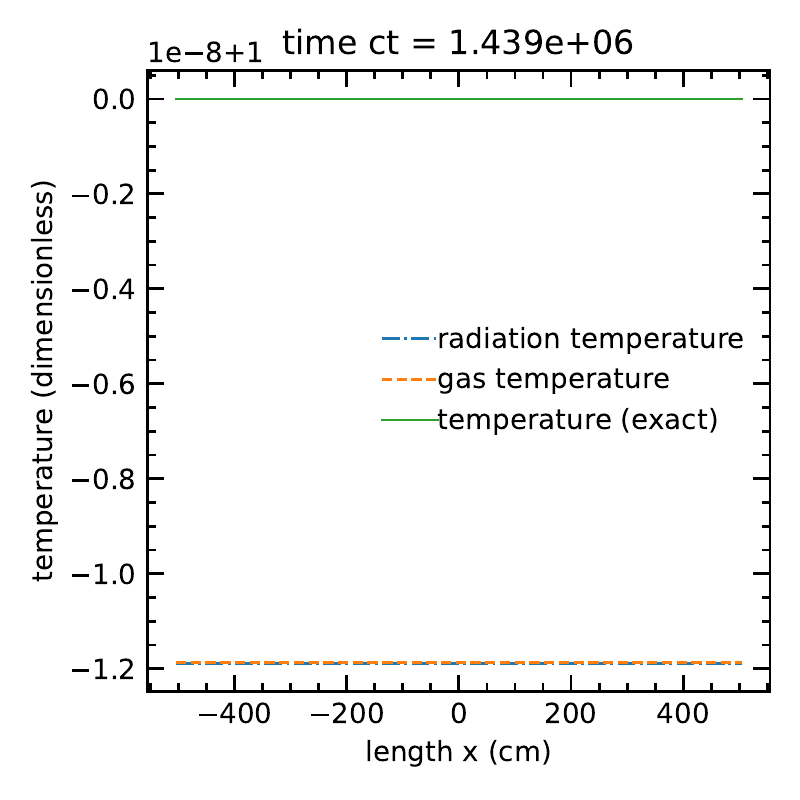

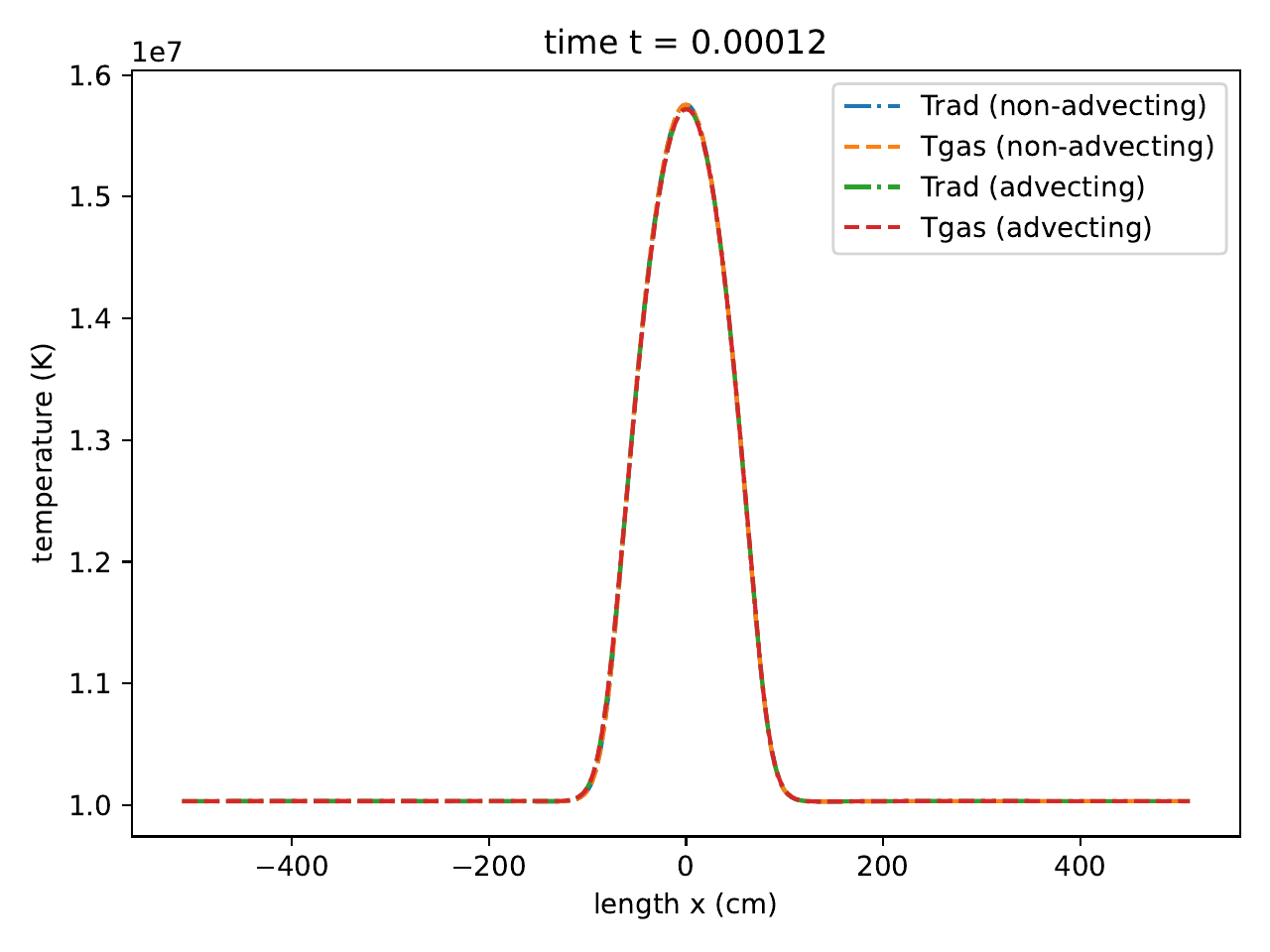

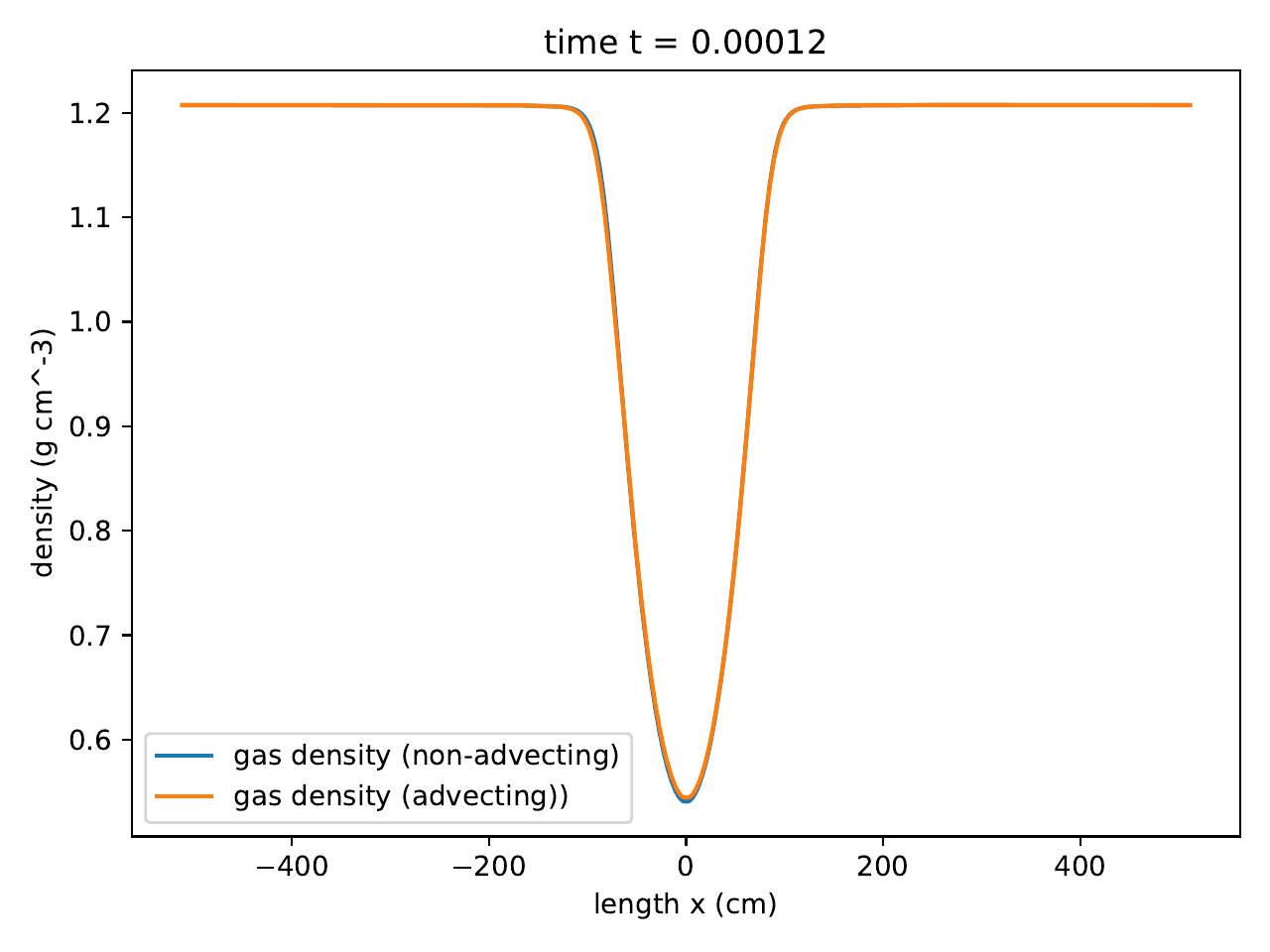

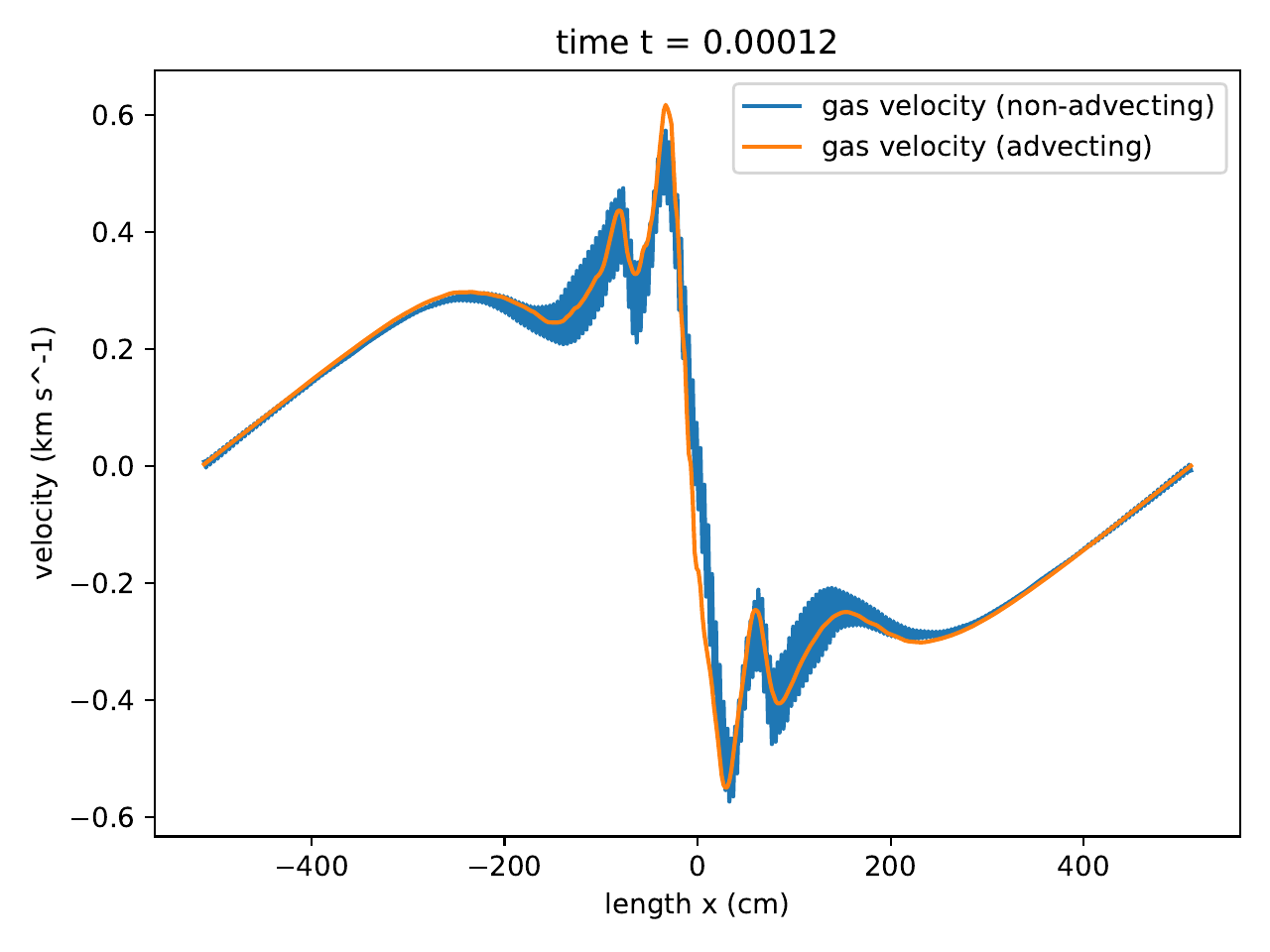

Uniform advecting radiation in diffusive limit

In this test, we simulate an advecting uniform gas in which radiation and matter are in thermal equilibrium in the comoving frame. Applying the Lorentz transformation to first order in \(v/c\), the initial radiation energy density and flux in the lab frame are \(E_r = a_r T^4\) and \(F_r = \frac{4}{3} v E_r\).

Parameters

Results

With \(O(\beta \tau)\) terms:

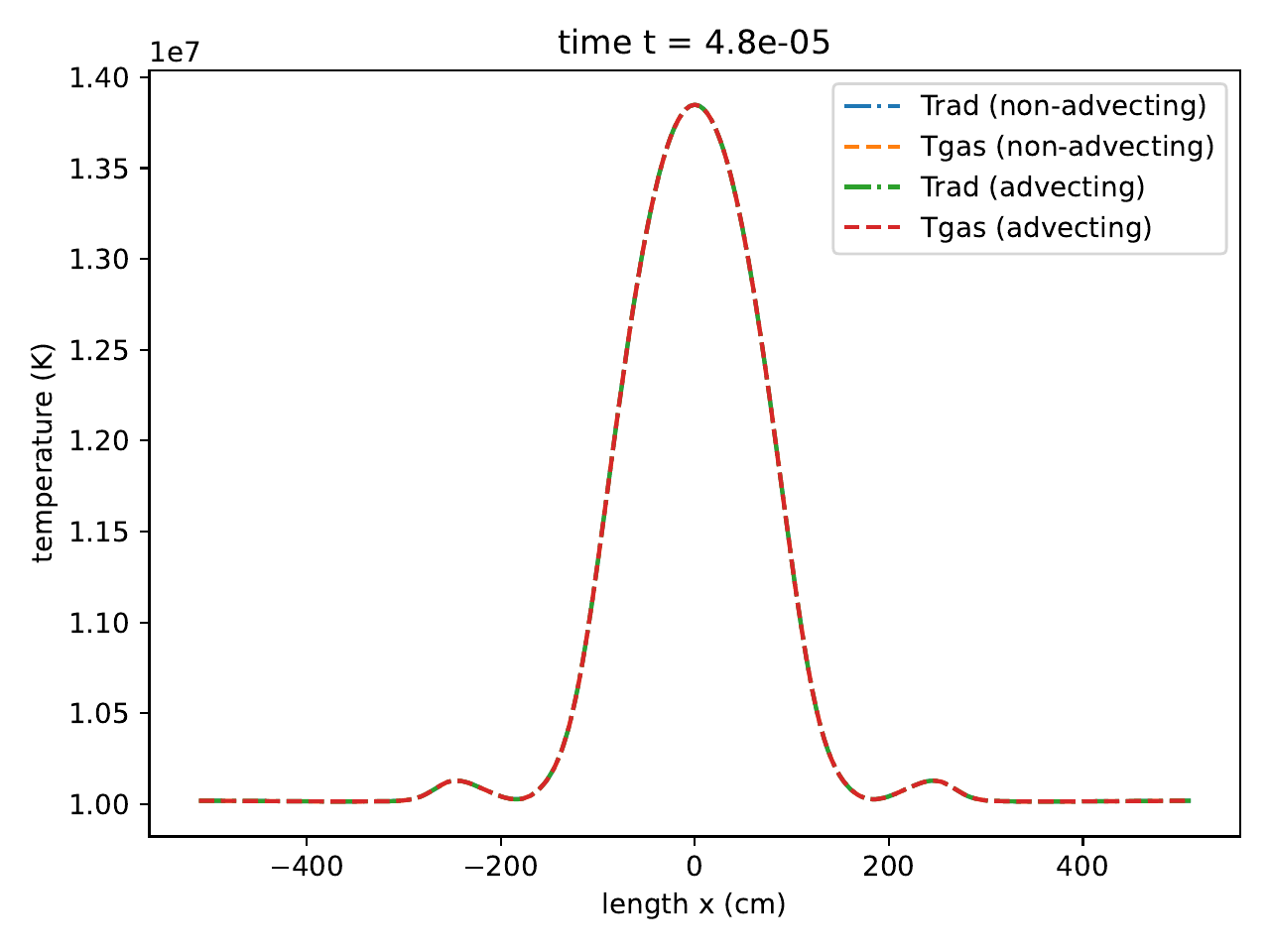

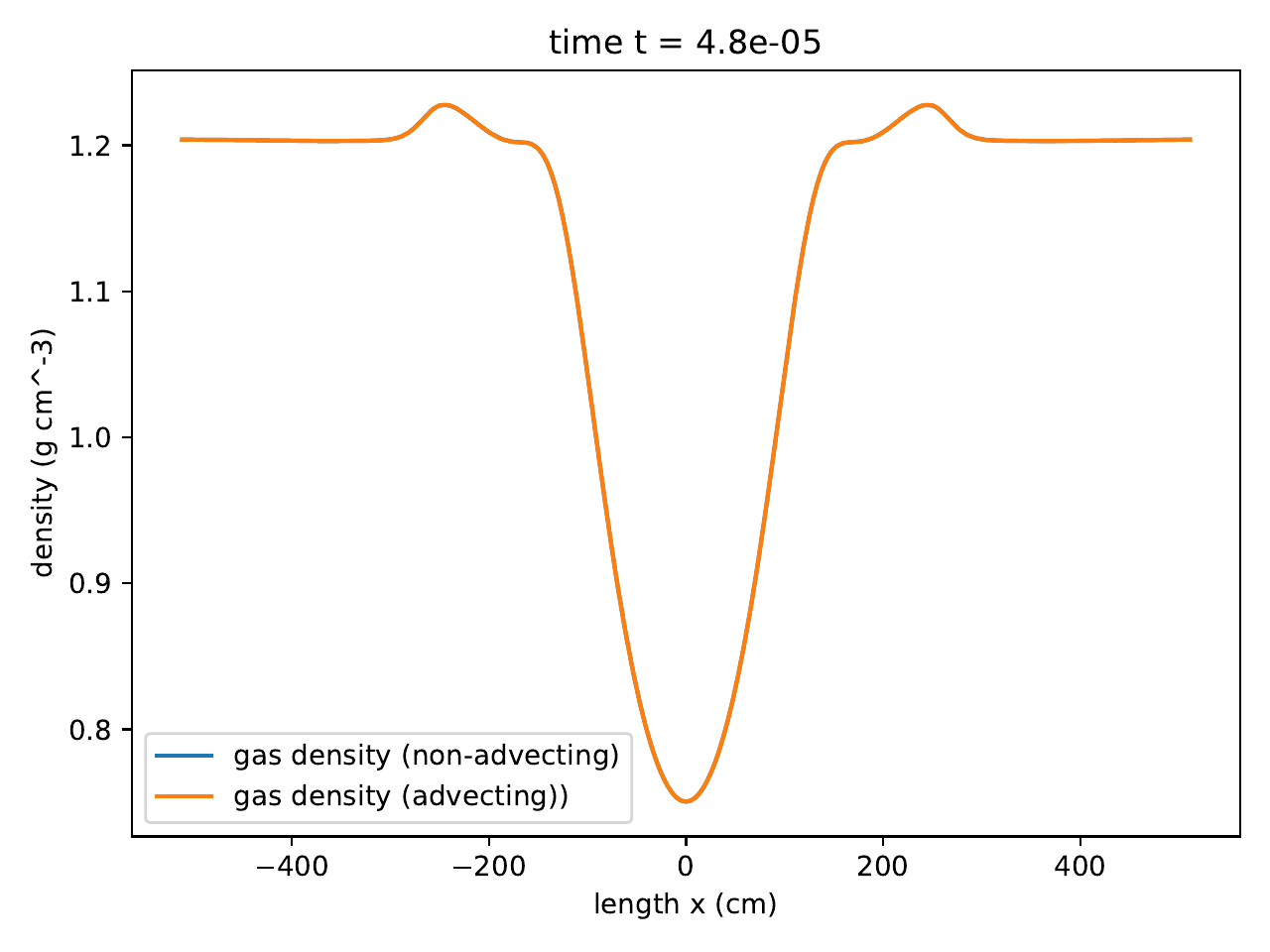

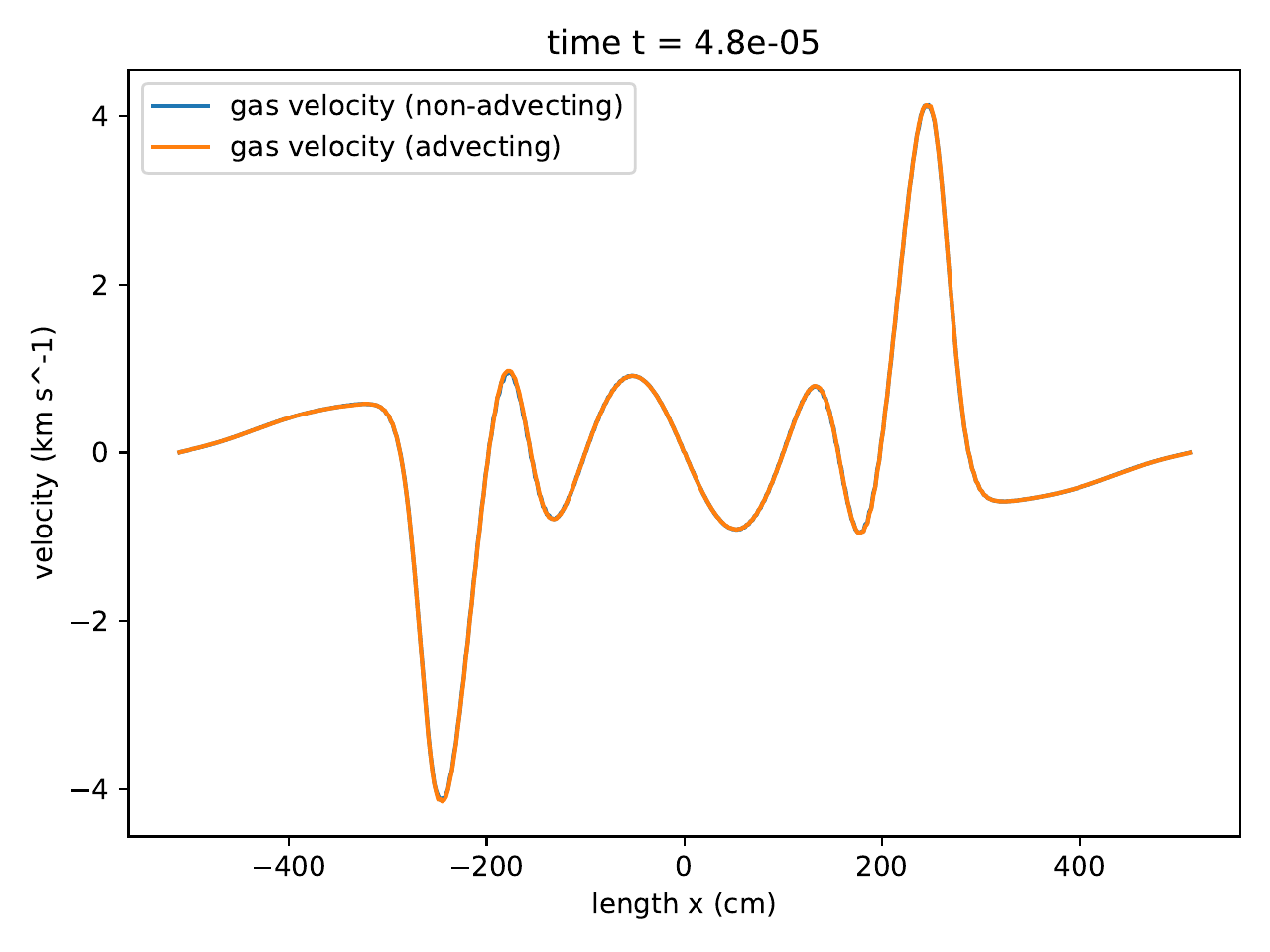

The radiation temperature and matter temperatures, along with the exact solution.

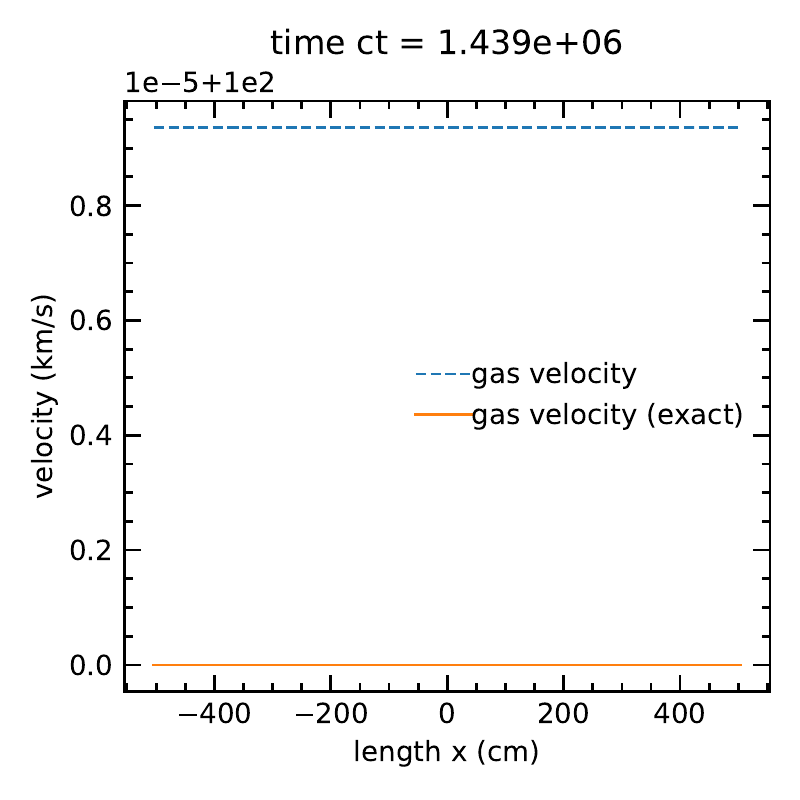

The matter velocity, along with the exact solution.

Without \(O(\beta \tau)\) terms:

The radiation temperature and matter temperatures, along with the exact solution.

The matter velocity, along with the exact solution.